CLEAR in Flight Support: From Urgent Moments to Organizational Reliability

How learning design turns high-pressure customer moments into scalable capability

Two hours before takeoff, “customer support” stops being a nice phrase and becomes a real test.

A woman stands at the airport check-in counter. Her suitcase is ready. Her body isn’t. The staff compares her booking with her passport and shakes their head: the passport number on the ticket doesn’t match the passport in her hand.

She booked with her old passport number. She’s traveling with a new one now.

The counter won’t update it. They give her the only instruction they can:

“Call your travel agency.”

She has about two hours.

So she calls, already bracing for impact—because she’s been here before. Long hold times. Support advisors who sound rushed. Explanations that don’t turn into action. Calls that end with nothing changed, and take less time than before.

This is where flight support becomes operations. In urgent passenger-info cases, success isn’t determined by “Can you change my passport number?” It’s determined by the conditions underneath it—eligibility rules, cutoff timing, and system limits that can flip the outcome in minutes.

The core skill, then, is policy-to-action translation (constraint translation): turning those conditions into a time-bound next step the customer can execute. When that translation fails, the customer leaves without a usable “what happens next,” reaches out again to rebuild the path, and the operation pays twice.

But the call is only half the story. The other half is whether the organization learns. If the outcome depends on which support advisor answers, the business absorbs variance as repeat contact, escalation noise, and inconsistent trust. And if the decision logic isn’t captured—what was verified, what constraints applied, what route was chosen—then the case disappears as soon as it ends.

That’s why flight support training has to build two things at once: in-the-moment clarity for the support advisor, and an organizational learning system—powered by QA, structured case notes, scenario updates, and calibration—that keeps standards reliable as policies shift and edge cases multiply.

Design Snapshot

Here’s the training design spec behind this essay. The sections below explain the operational problem it solves and the reasoning that shaped it.

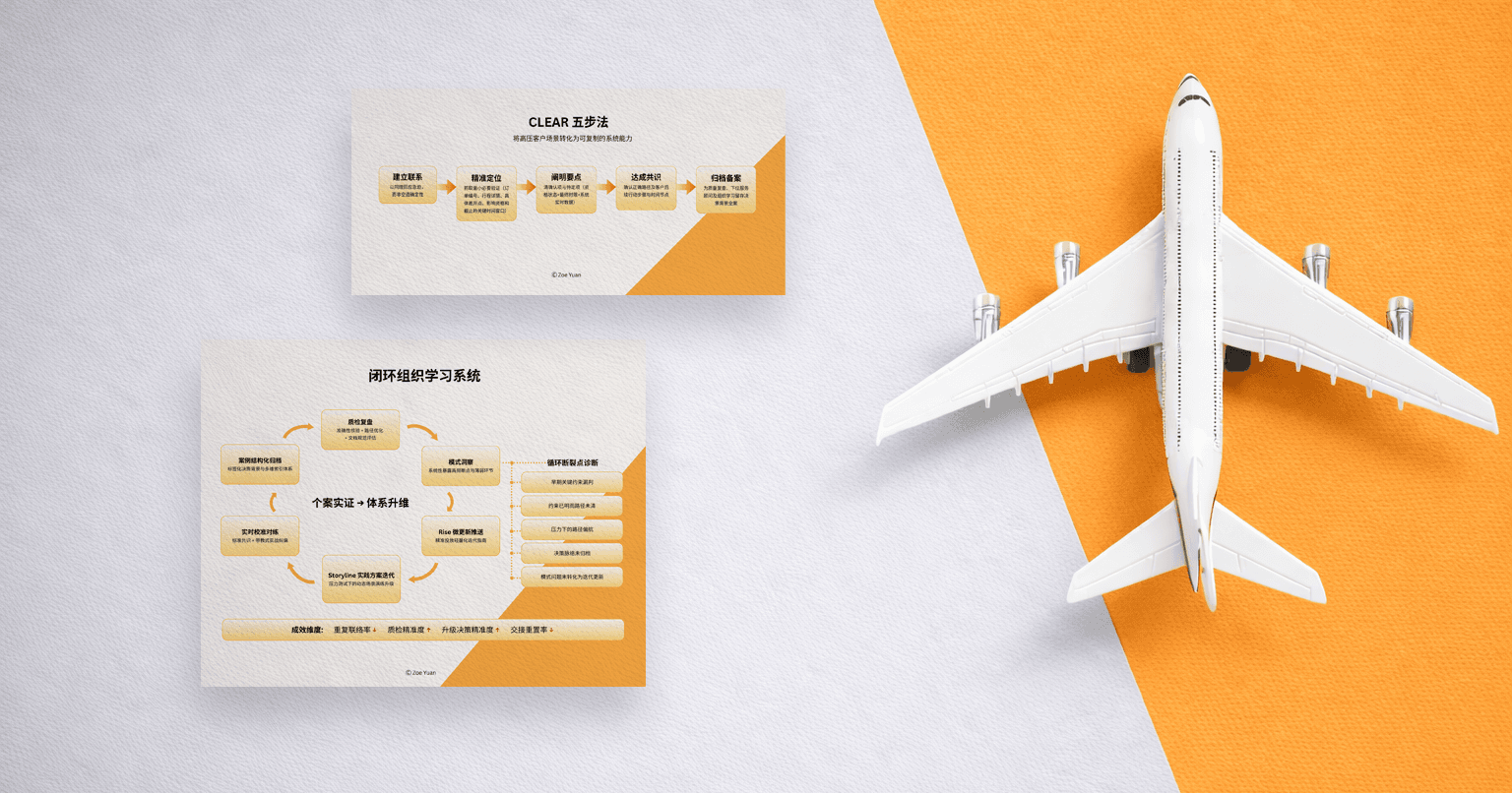

Training Design Spec: Flight Support — CLEAR + OMO Learning Loop

CLEAR is a five-step framework—Connect, Locate, Explain, Align, Record—I designed for handling urgent cases with policy-safe clarity.

Design Problem

In urgent passenger-info change cases (e.g., passport number correction close to departure), avoidable same-issue recontact increases when support advisors can’t translate conditional constraints (eligibility, cutoff timing, system limits) into a clear, time-bound next step—and when case notes fail to preserve decision context for QA and handoffs.

The business cost is invisible but real: operations pay twice for the same case, quality variance increases, and customer trust erodes one unclear interaction at a time.

Anchor Scenario

Urgent passenger-info correction • customer at airport check-in • departure ~2 hours

Learners

New support advisors (0–30 days): build baseline constraint clarity + routing judgment through the full OMO path

Experienced support advisors: prevent drift through simulation-based calibration; use the same library as targeted refreshers

Success Metrics

Primary: reduce avoidable same-issue recontacts within 24–72 hours for urgent passenger-info correction cases

Guardrails: maintain/improve QA policy accuracy; maintain/improve escalation appropriateness

These guardrails matter because speed without accuracy just moves problems downstream. The goal is reliable resolution, not just faster response.

Solution (OMO)

Online (Learn) — Articulate Rise Microlearning (3 Blocks) [Click the link to view the course]

CLEAR In Flight Support: why constraint clarity affects recontact, trust, and efficiency

Execute Under Pressure: minimum verification set, cutoff logic, constraint-language templates

Record To Learn: documentation standards, tagging (“constraint clarity gap”), and why well-recorded notes power individual and organizational learning

Online (Practice) — Articulate Storyline Branching Scenario (3 Decision Points) [Click the link to view the course]

Choose an opening message that acknowledges urgency and collects the minimum decisive verification set (no guarantees).

Choose the correct route (standard submission vs urgent escalation vs rebook recommendation) and explain why.

Produce a structured case note with the appropriate tag(s) so QA and the next support advisor can follow.

Offline (Transfer) — Live Training

New-Hire Skills Lab (60 min): CLEAR review → pair role-play + peer scoring → documentation drill → capstone full-class simulation + live calibration

Experienced Calibration Lab (60 min): rapid practice (peer role-play + scoring) → calibration to shared standards → documentation drill → capstone simulation → targeted refreshers assigned only to identified gaps (redo if “Needs Calibration”)

The learner separation matters. New support advisors build the foundation. Experienced support advisors prevent drift. Same standards, different entry points.

Assessment

Performance is evaluated using a scored rubric (capabilities + completion gates). The full rubric appears in the section below, “Training Isn’t Real Unless It’s Measurable (And Why This Rubric Looks The Way It Does).”

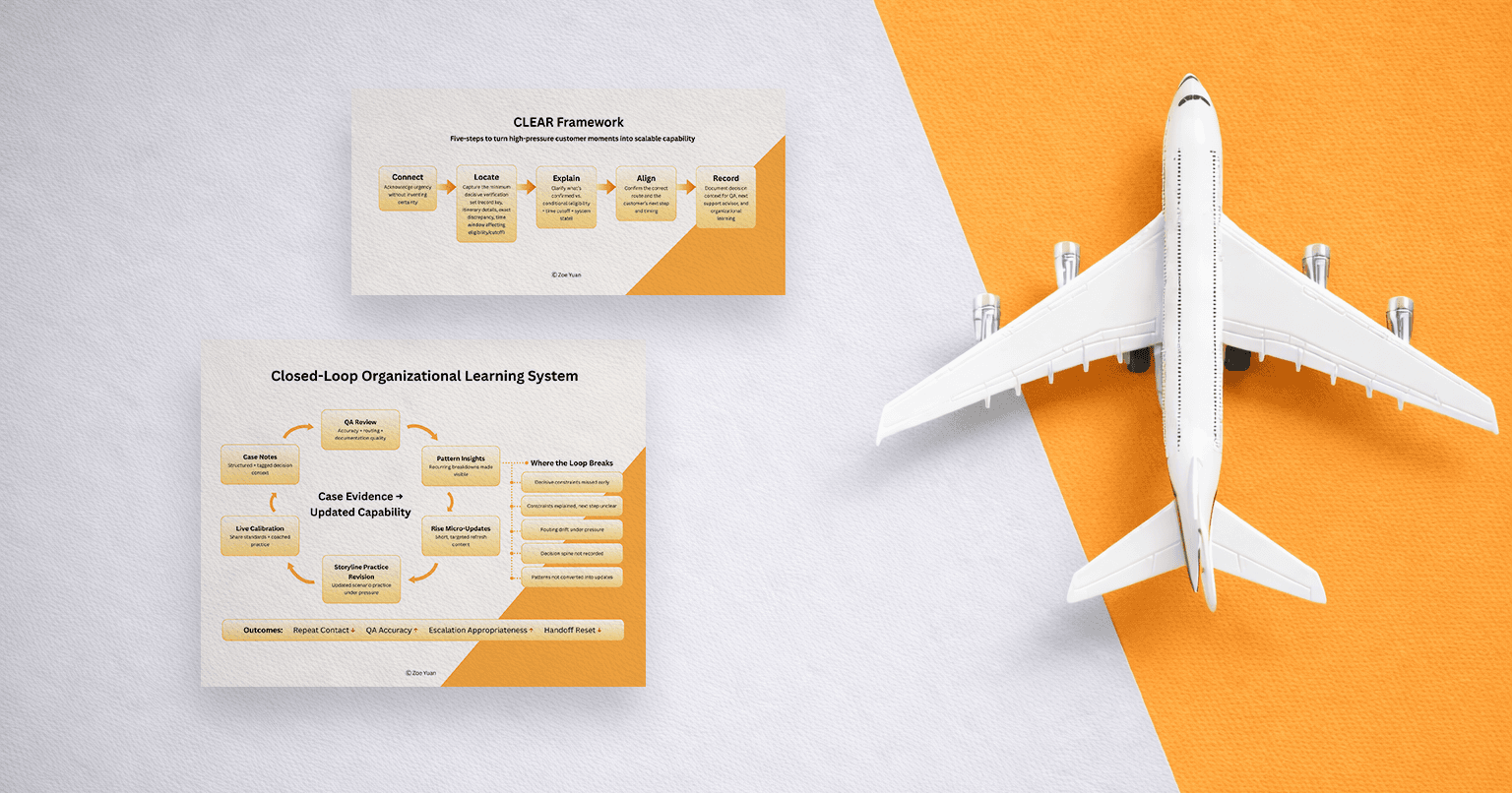

Closed-Loop Learning System

Tagged case notes + QA reviews identify recurring patterns weekly → patterns become micro-updates in Rise and new/updated branches in Storyline → recontact is tracked with QA accuracy and escalation appropriateness as guardrails.

This isn’t a training program that updates quarterly. This is a learning system that updates weekly, informed by the cases support advisors actually handle.

Rollout

This design starts small—one scenario, one rubric, one weekly loop—so it’s implementable within existing policy and tooling.

Pilot with one urgent-flight-support team for 2 weeks → review metrics/patterns → iterate → scale to onboarding and monthly calibration.

Training like this works at two levels. (1) It has to shape how a human support advisor performs in a time-critical moment—and (2) keep that performance consistent as policies shift, edge cases multiply, and teams grow.

Introduction

This essay is structured in two parts because flight support training isn’t an individual intervention—from an organizational level, it’s an operating system. Part I focuses on the unit of performance: what a human support advisor must do in the moment to handle urgency with policy-safe clarity, in a way that is teachable and measurable. Part II focuses on the unit of scale: how the organization keeps that performance reliable over time—through documentation, QA feedback, scenario updates, and calibration loops that turn real cases into updated capability. In other words, it’s not enough for a few support advisors to “learn the right way.” The learning system itself must keep learning, so business standards hold steady even as reality shifts.

Part I — A Response Framework That Makes Urgency Trainable

The Real Problem Isn’t The Passport Number. It’s the Conditions Around It.

“Please change my passport number” sounds like a simple request. In flight support, it’s not.

That request sits on top of conditions that can change the outcome instantly:

whether passenger info is eligible for correction

whether the request is already past a cutoff time

what the system can accept at that moment

which route applies (standard submission, urgent escalation, or rebook recommendation)

This is why the work isn’t just “be nice.” The work is: translate conditional constraints into a next step the customer can actually execute, in real time.

When that translation fails, the business outcome is predictable: avoidable same-issue recontact. Not because the customer is unreasonable, but because they still don’t have a viable path forward.

And that’s the difference between customer service theater and customer service function.

What Breaks Under Pressure

When people feel pressure, they try to reduce it. Supportive advisors reduce pressure by seeking certainty or rules.

Sometimes that sounds like reassurance:

“Don’t worry, we can definitely change it right now.”

Sometimes that sounds like policy:

“According to policy, this can’t be processed.”

Both are understandable. Both are human. Neither is sufficient.

Under time pressure, verification can become incomplete, constraint explanations can remain abstract, and routing decisions can drift—so the customer leaves without a concrete, time-bound next step, and the same issue recurs as a repeat contact.

What looks like a communication issue is often a sequencing issue: verify the decisive variables, state what’s conditional, choose the right route, and leave usable case context behind.

The sequence is the structure that holds when pressure tries to collapse thinking into reflex.

CLEAR: The Sequence That Holds Reality

To make the support advisors’ responses trainable, this training design uses a five-step response framework: CLEAR (Connect, Locate, Explain, Align, Record)

Connect: acknowledge urgency without inventing certainty

Locate: capture the minimum decisive verification set (record key, itinerary details, exact discrepancy, time window affecting eligibility/cutoff)

Explain: clarify what’s confirmed vs. conditional (eligibility + cutoff + system state)

Align: confirm the correct route and the customer’s next step and timing

Record: document decision context so QA and the next support advisor don’t restart from zero

A CLEAR opening for the airport case:

“I hear how urgent this is—let’s move quickly. Please share your booking locator, itinerary details, and what information changed. I’ll confirm eligibility and cutoff timing now, then guide the fastest valid next step (including urgent escalation if available).”

The important move here isn’t the tone. It’s the contract: not “I will fix it,” but “I will verify what determines the outcome and then provide the fastest valid route.”

That distinction protects trust. Promises that can’t be kept destroy credibility faster than honesty about constraints ever could.

A single sentence often decides whether the call becomes resolution or repeat contact:

“I can’t confirm the update until I check eligibility and cutoff timing in the system. If it’s eligible, I’ll guide the required step immediately; if it isn’t, I’ll explain the fastest alternative route right away.”

That’s constraint translation in plain language. No jargon. No false certainty. No policy dump. Just: here’s what determines the outcome, here’s what’s being checked now, here’s what happens next.

Training Isn’t Real Unless It’s Measurable (And Why This Rubric Looks the Way It Does)

A response framework only matters if it can be trained, observed, scored, and improved. In urgent flight support, measurement has to track what the business actually pays for: avoidable repeat contact, QA accuracy, and routing discipline under time pressure.

That’s why evaluation doesn’t score “confidence” or “friendliness” as standalone traits. What changes outcomes is whether a support advisor can translate constraints into an executable path—without breaking policy.

Below is the rubric used in simulation and live calibration. The rest of this section explains why it’s designed this way.

Assessment Rubric (Used in Simulation and Live Labs)

(A)Scored Dimensions (0–4 Total)

Each dimension is scored 0–2. Total score range: 0–4.

| Dimension | Strong (2) | Developing (1) | Risk (0) |

| Constraint Clarity | Separates confirmed vs conditional; names eligibility + cutoff; gives a time-bound next step; human tone; no guarantees | Constraints mentioned but vague/wordy, or next step lacks a timeline or tone turns robotic | Implies certainty/guarantee or policy dump without an executable next step |

| Routing Decision Quality | Correct route for urgency/cutoff; explains “why” in plain language | Route plausible, but urgency nuance missed, or reasoning unclear, or escalation delayed | Route incompatible with constraints/urgency |

(B)Completion Gates (Must-Pass)

If either gate fails, the outcome is Fail regardless of score.

| Gate | Pass (✓) | Fail (✗) |

| Minimum Decisive Verification Set | Record key (booking locator or equivalent), itinerary details, exact data discrepancy, and time window that affects eligibility/cutoff | Proceeds without a decisive variable |

| Documentation Completeness | Issue + urgency; constraints confirmed; actions + outcome; tag (e.g., “constraint clarity gap”) | Notes incomplete/unstructured; missing constraints/actions/outcome |

(C)Result Labels

PASS: score ≥ 3 and both completion gates ✓

NEEDS CALIBRATION: score = 2 and both completion gates ✓ (safe completion, not yet consistent)

FAIL: score ≤ 1 or either completion gate ✗

Why These Dimensions Are Scored

Constraint Clarity is scored because urgent passenger-info cases fail in a predictable way: the customer leaves without a usable “what happens next.” They call again to rebuild the path. “Strong” doesn’t mean the update succeeds—it means the support advisor clearly separates what’s confirmed vs conditional, names decisive constraints, and gives a time-bound next step without guarantees.

Routing Decision Quality is scored because a correct explanation with the wrong route still produces delay, escalation noise, and repeat contact. In urgent cases, routing is the difference between “in progress” and “too late.”

Why There Are Completion Gates

Some misses aren’t style issues—they break continuity. If the minimum decisive verification set isn’t captured, the route is guesswork. If documentation doesn’t preserve decision context, QA can’t validate and the next support advisor restarts the case. The gates protect the operation from smooth talk without a decision spine.

Why “Needs Calibration” Exists

Operations rarely improve on pass/fail alone. Needs Calibration captures an important middle ground: safe execution that still isn’t consistent with shared standards. That category makes training more precise—targeted practices where drift is showing—without forcing a full re-run of the entire program.

Taken together, the rubric doesn’t just grade performance—it defines the minimum conditions for a case to move forward and for the organization to learn from it.

Part I makes urgency trainable at the support advisor level: it turns high-pressure judgment into observable behaviors—constraint clarity, routing quality, and documentation discipline. But real operations don’t break because individuals aren’t capable. They break because capability decays into inconsistency as policies shift, edge cases multiply, and teams interpret constraints differently over time. Without a learning loop, yesterday’s “Strong” quietly becomes today’s drift—and the business pays for it through unnecessary follow-ups, escalation noise, and uneven quality.

Part II focuses on the unit of scale: how an organization turns live cases into reusable decision logic—so outcomes aren’t dependent on who answers.

Part II — Organizational Learning That Keeps Standards Aligned as Reality Shifts

Why Individual Training Isn’t Enough—and Why Organizational Learning Is Needed

Even strong support advisors drift—not because they stop caring, but because the ground keeps moving. Edge cases multiply, policies evolve, and systems change. Over time, the same scenario gets handled differently depending on who answers and what they’ve seen before. When that variance accumulates, the operation absorbs it as repeat contact, escalation noise, and QA gaps that are harder to fix after the fact.

Individual training is necessary. It’s also insufficient.

The second half of this design focuses on the organization’s memory: how decision logic is captured, patterns are made visible, and capability is updated quickly enough that the next case is easier—and more consistent—than the last.

Case Intelligence Capture

A learning loop can’t run without usable inputs. If documentation doesn’t preserve decision context, every follow-up starts from scratch.

The most useful case notes aren’t long. They preserve the decision spine:

the issue and urgency

the constraints confirmed (eligibility, cutoff, system state)

what actions were taken and what the outcome is at that moment

a tag (e.g., “constraint clarity gap”) that makes patterns visible

When notes are structured and taggable, they become analyzable. When they become analyzable, learning becomes faster and more targeted. This is where documentation stops being a compliance task and becomes operational intelligence.

Closed-Loop Organizational Learning System

This is a learning system—not a one-time training event. It captures decision context in the work itself, surfaces patterns through QA, and updates training fast enough to reduce repeat contact while protecting standards as reality shifts.

Once case intelligence exists, QA becomes more than a score at the end of the line. In this system, QA is the feedback engine that keeps standards stable while reality shifts.

QA reviews make policy accuracy, routing discipline, and documentation quality visible across the floor—not just inside isolated calls. Paired with tagged case notes, QA can surface recurring breakdowns: which constraints are being missed, where explanations fail to become executable next steps, and where escalation is being used under pressure.

But visibility only matters if it turns into action. A real learning operation doesn’t wait for quarterly updates. It runs a loop:

weekly QA + tagged notes surface recurring patterns

patterns become micro-updates and scenario updates

support advisors practice the new pattern under pressure

live calibration keeps standards aligned across the floor

This is where Articulate Rise and Storyline become more than authoring tools. They become delivery mechanisms for operational learning: short updates, fast practice, and repeatable standards.

And because new hires and experienced support advisors have different needs, the live sessions are separated:

new hires build baseline capability through a skills lab

tenured support advisors maintain consistency through calibration practices and targeted refreshers

Same standards. Different paths. That’s how quality holds while the world changes.

The learning system doesn’t try to predict every edge case. It detects patterns weekly and updates capability before those patterns become entrenched.

Where the Loop Breaks

When urgent passenger-information cases require multiple contacts, the issue is rarely a lack of effort or empathy. It’s missing decision context—the few variables that determine whether an action is possible at this time.

When repeats persist, it’s usually because one link in the loop is weak. Most cases trace back to one (or more) of these breakdowns:

Decisive constraints weren’t surfaced early. Eligibility, cutoff timing, and system state weren’t confirmed in the first minute—so the call stayed “about the problem” rather than becoming “about the path.”

Rules were described, but the path stayed vague. The customer heard the constraints but left without a time-bound next step: what to do, where to do it, what to send, and what would happen afterward.

Routing drift happened under pressure. Escalation became a pressure-release valve, or a standard channel was used when the time window demanded urgency—both create delay disguised as progress.

Case notes didn’t preserve the decision spine. The next support advisor—or QA—couldn’t see what was verified, what was conditional, what was attempted, and what the system returned. The case resets.

QA sees misses, but learning doesn’t close the loop. The operation can name errors (“policy accuracy,” “incomplete verification”), but those patterns don’t become micro-updates and repeated practice that actually support advisors.

This is why the solution isn’t more reminders. In urgent flight support, reminders lose to adrenaline. The leverage is sequencing and memory—captured, reviewed, updated, practiced, calibrated.

What Leaders Need to Institutionalize

This training design scales beyond one scenario because it targets a deeper capability: turning constraint-heavy work into teachable, measurable performance—without diluting policy accuracy.

Make Constraint Translation a Defined Job Skill

Flight support is a conditional domain: eligible if X, possible before Y, only through channel Z, requires document set W. Top performers don’t just “communicate well.” They reliably surface decisive variables early, separate confirmed vs conditional without sounding cold, and translate constraints into an executable route now. That is a skill—and skills can be trained when the sequence is explicit.

Treat QA as a Design Input, Not a Compliance Afterthought

In flight support, quality is a guardrail against speed. If speed increases by sacrificing policy accuracy, the work doesn’t get faster—it gets deferred into rework and escalations. That’s why measurement has to reflect what the operation actually pays for, and why QA patterns should drive what gets updated and practiced next.

Build a Learning System That Updates at The Pace Of Reality

Training fails when the operation changes faster than the course can keep up with. The fix isn’t longer content—it’s a loop: structured case notes capture decision context → tags make patterns queryable → weekly QA patterns produce micro-updates → Rise pushes short updates → Storyline pushes practice → live calibration keeps standards aligned. That’s how training becomes operational infrastructure.

Reduce Recontact by Designing for Next-Step Clarity

Customers don’t reach out again because they enjoy calling. They do it because the last interaction didn’t produce a usable path. A high-quality outcome isn’t “policy was explained.” A high-quality outcome is that the customer can repeat what happens next, and the organization can see exactly what was verified and why the route was chosen.

What the Loop Produces

If this design works, it doesn’t just produce better calls. It produces a more stable operation.

Unnecessary follow-ups fall because customers leave with a time-bound next step and realistic expectations. QA accuracy stays protected because support advisors stop improvising certainty. Escalations become more appropriate because routing decisions are made from verified constraints, not panic.

When constraint communication doesn’t land as an executable path, the operation pays twice for the same problem. The quiet win is that reliability compounds: fewer resets, cleaner handoffs, tighter variance across the floor.

A response framework like CLEAR makes the work teachable in the moment. A closed-loop learning system turns those moments into organizational memory—so the next support advisor doesn’t restart from zero, and the next new hire doesn’t have to learn through failure.

And for the customer at the airport—two hours before departure—the goal isn’t to promise a miracle. It’s to deliver what operations can sustain at scale: truthful clarity, a usable path, and a system that keeps improving without breaking policy.

Closing Thoughts: The Structural Insight

Most organizations treat urgent cases as exceptions requiring heroics. The best organizations treat them as signals that the system needs to learn.

The woman at check-in doesn’t need reassurance alone. She needs a support advisor who can translate “passport number changed” into what determines eligibility, what can be confirmed now, the fastest valid route, and the next step—on a timeline. That capability doesn’t come from hiring better people. It comes from making expertise executable, evaluation explicit, and learning continuous.

When learning cycles lag reality, quality becomes a function of who picks up the call—and customers experience the operation as a lottery. When case patterns are captured weekly and capability updates faster than edge cases repeat, quality becomes organizational.

That’s the move from heroic to reliable. From individual excellence to institutional capability. From good people to good systems that make good people consistently excellent.

Reliability is what enables operations to scale without quality degradation—while protecting QA and reducing avoidable repeat contact. That’s what compounds.

About the Author

Hi, I’m Zoe. I’m a Learning Experience Designer and Trainer working at the intersection of learning science, psychology, and human-centered AI product design—with a focus on designing experiences that don’t just produce output, but build durable skills. I design training ecosystems—tutorials, microlearning, scenario simulations, and live calibration—to help teams perform reliably under pressure. If your team is building digital and AI tools for learning or behavior change and you value both rigor and care, I’m open to conversations about Learning Experience Design, Training/Enablement, and Human-Centered AI product roles.