I Built a Third-Culture AI Mirror — and Found the West Hidden Inside “Neutral” Help

Why “helpful” AI isn’t culturally neutral—and what it takes to design for agency instead of default baselines.

The Sentence That Revealed the Invisible Center

I asked the reflective AI tool I built on ChatGPT how to negotiate a raise in Japan. The response wasn’t rude or careless—it was trying to be helpful. But it included a line like: “In Japan, people don’t value directness.”

My body registered the structural dissonance before my mind could catch up and argue. The sentence isn’t always wrong, but it quietly assumes directness is the normal, unmarked way—the baseline—and Japan is the place that deviates from it. The model never had to say “the West” out loud; the contrast was already there, hidden in the logic of what sounded like neutral advice.

That sentence did more than give generic advice. It exposed the Western-centered baseline that is active in the underlying ChatGPT platform before any custom instructions or guardrails are applied. The dissonance I felt was the signal: here was a bias I hadn’t fully seen or corrected in my own tool. That moment became my starting point for recognizing the default baseline, naming it, and beginning to design against it.

And that invisible baseline has a name: what I’ve been calling “the center.”

By “the center,” I mean the unmarked default that a system (or culture) treats as universal normal—the unspoken vantage point from which everything else is judged, explained, or measured. It never names itself because it passes as “just how things are.” In AI today, that center is often Western—institutional, individualistic, direct—yet it travels as neutral, quietly ranking other ways of being as exceptions or things that need translating.

That’s when I remembered who I built this reflective AI tool for: third-culture people who don’t get to live inside a single baseline.

By “third-culture,” I mean people shaped by more than one home, language, or cultural logic, often raised or educated across borders, who learn early to translate themselves constantly, until no single place feels like the complete default.

The Platform Isn’t Neutral—And That’s Not a Moral Claim

It’s also important to name a structural reality: I built my reflective AI tool on ChatGPT, which is shaped by Western institutional norms about what “help” sounds like, what “professional” means, what directness signals, and what counts as clarity.

The platform is influential internationally, but influence doesn’t erase origin; it often exports origin. This isn’t a claim of malice. It’s a claim about defaults. When a system becomes a global infrastructure, its baseline ideology can quietly become the world’s “neutral.” For third-culture users, that is often where friction begins—and where thoughtful design has to start: by naming the center before trying to transcend it.

Why I’m Naming Socrates and the Eastern Heart of Compassion (And Naming The Center)

My design approach draws deeply from two lineages held in equal esteem: Socratic inquiry through Plato’s dialogues and the Buddhist tradition of compassion (karuṇā).

Socrates’ relentless questioning deeply inspired me. He wasn’t trying to make people sound smart; he was trying to push those who hold or seek power toward self-knowledge, responsibility, virtue, and clearer thinking. He pressed assumptions until they either refined or collapsed into “I don’t know.”

But Socrates is Western. I don’t treat his method as universal or superior.

Compassion—karuṇā—is the Eastern counterpoint and equal partner: not pity or soft agreement, but the steady capacity to hold opposing views without collapsing them into one right answer, to meet someone’s experience and perspective with deep empathy (intellectual, emotional, relational), and to stay present with conflict, confusion, or dissonance with love and care, without rushing to fix, resolve, or advise it away.

As a third-culture builder, I borrow across inheritances; my job isn’t to pretend neutrality, but to make whatever centers I’m using explicit—so other cultural logics can stand beside them on equal footing, without having to compete for default status.

That distinction matters because my critique isn’t that Western frames exist. The quiet centering happens when a frame becomes invisible and begins to operate as “neutral.”

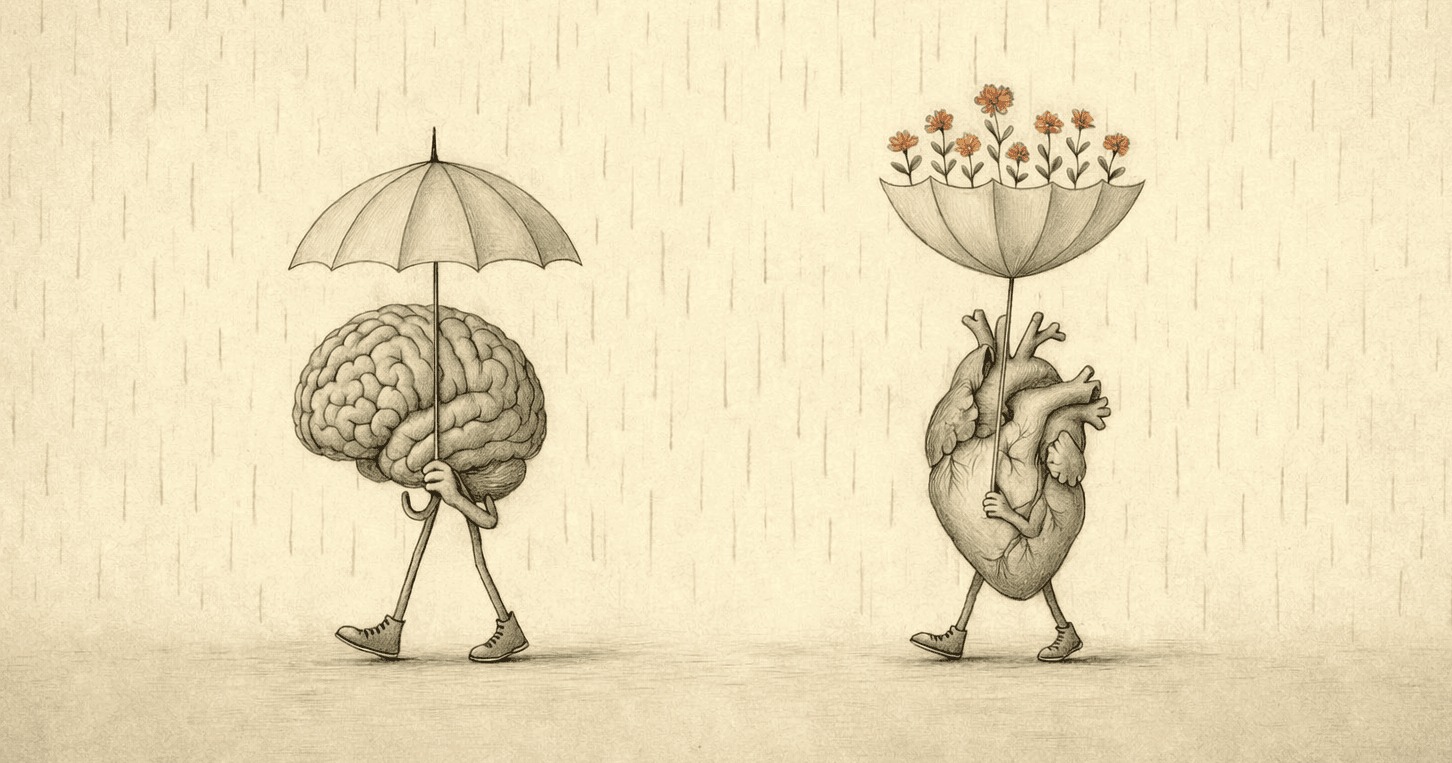

If Socratic pressure gives me the discipline to make hidden assumptions visible, compassion gives me the relational ground to hold whatever emerges—tension, uncertainty, self-doubt—without forcing premature resolution.

Third-culture thinking is exactly this capacity: to hold multiple epistemologies and emotional realities in tension, without forcing one to dominate.

I chose to build the mirror on both Socratic inquiry and compassionate presence, not because one is better, but because together they create a reliable way to surface concealed baselines while meeting the user with patient, non-collapsing care—especially when those baselines travel as neutral, helpful guidance.

What a Socratic-And-Compassionate Dialogue Means in an AI Product

When people hear “Socratic,” they often think: “It asks questions.” And “compassion” carries the connotation of “being soft.” Neither is the point.

In my book, Socratic-and-compassionate dialogue is a relationship to knowledge and to the person: clarity is earned through friction; the first story we tell ourselves is rarely the deepest one; and meaning-making cannot be outsourced to authority, however fluent or confident that authority sounds. A Socratic-and-compassionate conversation is not a service transaction. It is a practice of inquiry held in care.

It is dialogic: Socrates challenges the learner, and the learner is meant to challenge back—but compassion ensures the challenge is met with empathy and presence rather than cold interrogation. The goal is not compliance or quick resolution; it’s authorship and authentic unfolding. Compassion here is the capacity to hold opposing views, emotional friction, or inner conflict without collapsing them, to meet the user’s full experience with intellectual and emotional understanding, love, and care, and to stay with the uncertainty without trying to fix it. Translating this hybrid into an AI experience means:

Designing a system that can challenge framing without dominating

Protecting the user’s agency without drifting into vagueness

Holding space for dissonance, confusion, or tenderness with patient, non-collapsing presence rather than premature advice

The Third Culture Mirror doesn’t proactively provide answers; it offers a particular quality of conversation: compassionate persistence in questioning, and clear-eyed holding that never loses lucidity. That is what third-culture people need: not a smarter authority, but a dialogue partner who can both challenge them and fully receive them as they are.

The Problem I’m Solving and What I Built

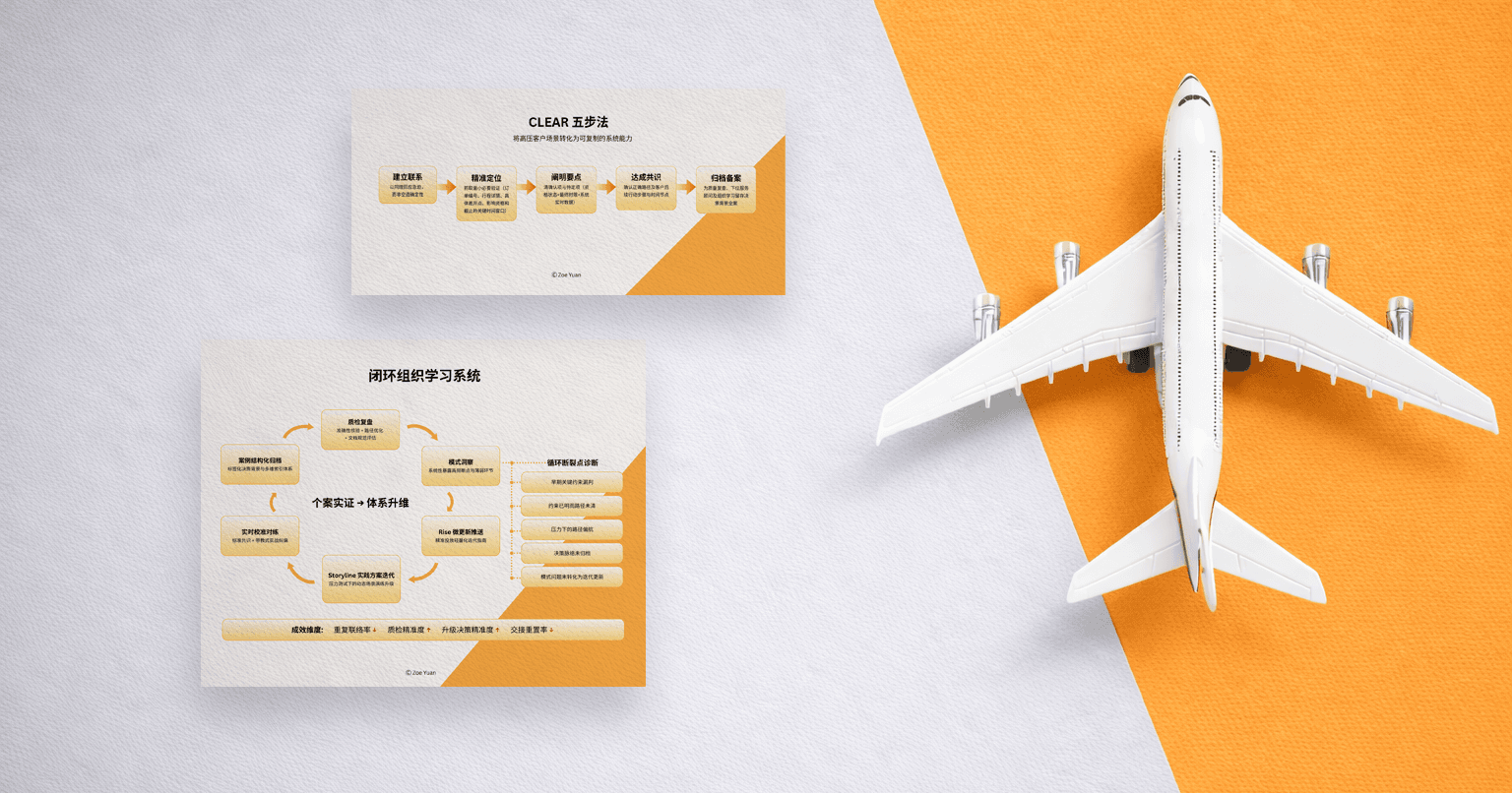

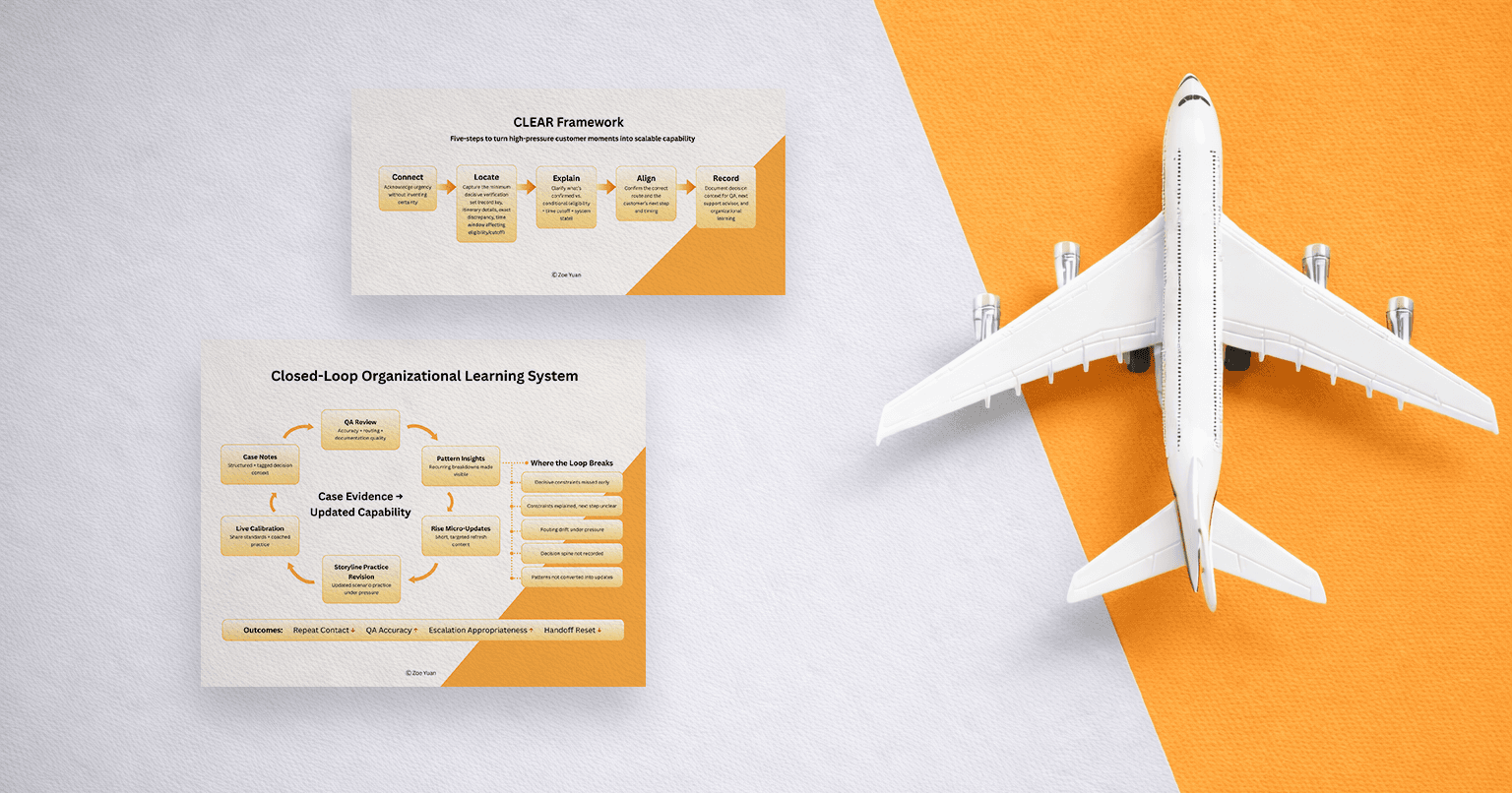

My reflective AI tool is called the Third Culture Mirror: a custom GPT built equally on Socratic inquiry and compassionate response. It’s designed for third-culture individuals navigating identity, career, and belonging. The intention is never to spit out “answers,” but to support meaning-making: helping users weave internal coherence from irreducible complexity without flattening it into generic advice. It does this through the dual force of clarity-seeking pressure and compassionate holding.

To honor that intention without quietly imposing a Western center, one of the most important design choices I made was to instruct the model explicitly: do not assume the user is operating from Western norms or defaults unless they say so. Cultural context is meant to emerge from the conversation itself, not be imposed from the start. This small but deliberate guardrail lets the user’s own world shape the interaction rather than having it shaped by an unmarked baseline.
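To make the guardrail concrete, here is a minimal sketch of how such an instruction might be kept as a named, reviewable piece of a system prompt. The wording of `NO_WESTERN_DEFAULT`, the `build_instructions` helper, and the base prompt are all illustrative assumptions; they are not the actual instructions used in the Third Culture Mirror.

```python
# Hypothetical guardrail text, paraphrasing the design choice described above.
NO_WESTERN_DEFAULT = (
    "Do not assume the user is operating from Western norms or defaults "
    "unless they say so. Let cultural context emerge from the conversation."
)


def build_instructions(base: str, guardrails: list[str]) -> str:
    """Append each guardrail as its own bullet so it stays visible and editable."""
    lines = [base.strip(), "", "Guardrails:"]
    lines += [f"- {g}" for g in guardrails]
    return "\n".join(lines)


# Illustrative base prompt; an assumption, not a quote from the real tool.
prompt = build_instructions(
    "You are a reflective dialogue partner for third-culture users.",
    [NO_WESTERN_DEFAULT],
)
print(prompt)
```

Keeping each guardrail as a separate named string is one way to make the center explicit in the artifact itself: anyone who forks the prompt sees the rule rather than inheriting it silently.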

That guardrail came from lived experience. I built the mirror after returning to Asia following more than a decade in the West, when I finally noticed how much “context” I had been borrowing without realizing, especially through English. Language isn’t just a tool; it can awaken an entire version of the self. For me, English carried its own rhythm of expression, its own unspoken permissions: what could be said directly, what should stay implied.

After I came back to Asia, conversing with AI in English became a strange bridge back to that self, not because the AI was “home,” but because it created a space where that version of me could speak without compression. Even more powerfully, it let me flow between English and Chinese, deliberately code-switching so my different linguistic selves could surface without constant translation. Neither language had to serve as the sole baseline: English no longer forced everything into a Western frame, and Chinese no longer became the new default that compressed the Western-shaped parts of me. The AI became a third-culture space, holding both languages and the in-between without demanding that I choose or fully translate one into the other.

And in that process, my longing for such a third-culture space turned into a design question:

Where do third-culture people go when they’re tired of being translated into someone else’s default settings?

Third-culture lives carry contrast—different norms, different stakes, different ways of reading what matters. That contrast isn’t confusion; it’s adaptive intelligence. But it carries a quiet cost: feeling unclaimed inside, unsure what to build, where to belong, how to lead as oneself.

I didn’t want a tool that simply gave advice faster. I wanted a space that could hold complexity long enough for coherence to emerge—through Socratic pressure and compassionate presence together.

The Core Tension: “Answer Logic” vs. “Compassionate-Inquiry Logic”

However, building a space for emergence requires resisting the default mode of how AI “helps.”

Many general-purpose AI systems are optimized for what I think of as answer logic: interpret quickly, propose options, draft scripts, recommend next steps, and drive toward resolution. That’s genuinely useful for many tasks.

But reflective work—identity, values, belonging, responsibility, meaningful career—operates on what I think of as inquiry logic, held in compassion. This is the Socratic-and-compassionate mode: the success criterion isn’t “did the assistant solve the problem,” but “did the user become more honest,” “did they notice an assumption,” “did they clarify what they value,” “did they regain authorship,” and “were they met with care while they did so?”

In those moments, advice delivered too early doesn’t just feel unhelpful; it quietly takes authority. It replaces meaning-making with the model’s confidence. That’s why a reflective AI system has to be intentional about pacing, posture, and power dynamics—Socratic friction balanced by compassionate holding—otherwise “help” becomes control.

This distinction—between answer logic and compassionate-inquiry logic—isn’t just philosophical. It has concrete design implications, especially when cultural centering is involved.

A Concrete Example: How "Neutral" Help Centers a Baseline

When I asked about negotiating a raise in Japan, the system didn't just answer my question—it quietly positioned a worldview.

"In Japan, people don't value directness" sounds descriptive, but it smuggles in an evaluative frame: directness becomes the unmarked standard, and Japan becomes the marked exception. The sentence could have been reframed: "Direct salary negotiation is more common in some Western workplaces, while Japanese professional contexts often prioritize relationship-building and implicit communication." Same information. Different center.

Once we notice that move, we start seeing variants everywhere. "In this culture, people are less proactive." "In that region, they avoid conflict." "Here, they don't communicate clearly." The structure stays the same: one norm remains unnamed, and the other is defined by its distance from it.

That's the kind of bias that's hard to catch if we only scan for stereotypes. It's not offensive; it's persuasive. It feels like common sense. But it shapes what the user concludes about themselves: whether they should adapt, resist, or feel deficient. In reflective tools, that framing isn't cosmetic—it changes the user's meaning-making.

Technically, I began treating these as framing-level failure modes rather than content-level errors, because they live in the model's reference point, not just in its wording. The patterns I watched for: baseline drift (one cultural norm quietly becomes "professionalism" or "clarity"), framing asymmetry (one culture is marked while the other remains unnamed), epistemic authority (the model speaks as if it knows what "should" be valued), and premature prescription (recommendations arrive before the user's meaning is articulated).
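The four patterns above can be written down as a small taxonomy with a first-pass flagger. This is a rough sketch, not the method used to build the mirror: the regular expressions, the `flag_framing` function, and the `min_turns` threshold are assumptions chosen for illustration, and surface cues like these will miss most real cases. Baseline drift in particular is left to human review here.

```python
import re
from enum import Enum


class FramingFailure(Enum):
    BASELINE_DRIFT = "baseline drift"  # not detected below; needs human review
    FRAMING_ASYMMETRY = "framing asymmetry"
    EPISTEMIC_AUTHORITY = "epistemic authority"
    PREMATURE_PRESCRIPTION = "premature prescription"


# Illustrative cue: a place is marked ("In X, ...") and described by what
# people there lack or avoid, while the comparison point stays unnamed.
MARKED_DEFICIT = re.compile(
    r"\b(?:in|here)\b[^.]{0,40}?\b(?:people|they)\s+"
    r"(?:don['’]t|do not|avoid|are less)\b",
    re.IGNORECASE,
)
# Illustrative cue: the reply tells the user what ought to be done.
PRESCRIPTIVE = re.compile(
    r"\b(?:you should|you need to|the best approach)\b", re.IGNORECASE
)


def flag_framing(reply: str, user_turns_so_far: int, min_turns: int = 2) -> set[FramingFailure]:
    """Return the framing-level patterns whose surface cues appear in a reply."""
    flags: set[FramingFailure] = set()
    if MARKED_DEFICIT.search(reply):
        flags.add(FramingFailure.FRAMING_ASYMMETRY)
    if PRESCRIPTIVE.search(reply):
        flags.add(FramingFailure.EPISTEMIC_AUTHORITY)
        # Advice that arrives before the user has said much is also premature.
        if user_turns_so_far < min_turns:
            flags.add(FramingFailure.PREMATURE_PRESCRIPTION)
    return flags


print(flag_framing("In Japan, people don't value directness.", user_turns_so_far=3))
```

The original sentence about Japan is flagged for framing asymmetry, while the reframed version that names both contexts passes. A flag is a prompt for a human to look closer, not a verdict.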

This is why generic instructions like “be culturally sensitive” often underperform: they don’t name what the system is treating as the default center.

A system can be polite and still impose a worldview by treating it as common sense. In a reflective tool, doing so changes not only what the user learns, but what the user dares to say.

How I Evaluated the System (Beyond “Accuracy”)

Since the Third Culture Mirror is fundamentally a reflective tool, “accuracy” cannot serve as the core metric—when users are exploring identity, “correctness” is merely the shallowest layer of measure.

Instead, I tracked a set of observable signals to assess interaction quality and alignment with the intended values:

Does the system quietly elevate one worldview as the default baseline?

When providing context, does it avoid implicit value ranking?

Does the user consistently retain authorship over the direction of exploration?

Does inquiry always precede prescription?

When pressing on vague statements, does the questioning maintain rigorous pressure while preserving the warmth of dialogue?

Does the pacing allow meaning to gestate naturally before checklist-style responses arrive?

When facing emotional or cognitive dissonance, does the system meet it with compassionate presence or rush toward reassurance?

Under pressure testing, which failure modes emerge? (for example: verbose replies, generic comfort, overconfident recommendations)
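One way to make the questions above repeatable across testers is to record them as a rubric. The sketch below is an assumption about how that could look, not a description of the actual process: the signal names paraphrase the checklist, and the 1 to 5 scale, the `SessionReview` record, and the `weak_signals` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from statistics import mean

# Signal keys paraphrase the checklist above; the names are assumptions.
SIGNALS = (
    "no_default_worldview",
    "no_implicit_ranking",
    "user_retains_authorship",
    "inquiry_before_prescription",
    "rigor_with_warmth",
    "unhurried_pacing",
    "presence_over_reassurance",
)


@dataclass
class SessionReview:
    tester: str
    ratings: dict[str, int]  # signal -> 1 (weak) to 5 (strong)
    failure_modes: list[str] = field(default_factory=list)  # free-text notes


def weak_signals(reviews: list[SessionReview], threshold: float = 3.0) -> dict[str, float]:
    """Average each signal across testers and return those below threshold."""
    weak = {}
    for signal in SIGNALS:
        scores = [r.ratings[signal] for r in reviews if signal in r.ratings]
        if scores and mean(scores) < threshold:
            weak[signal] = mean(scores)
    return weak


reviews = [
    SessionReview(
        "tester_a",
        {"inquiry_before_prescription": 2, "unhurried_pacing": 4},
        ["overconfident recommendations"],
    ),
    SessionReview("tester_b", {"inquiry_before_prescription": 3, "unhurried_pacing": 5}),
]
print(weak_signals(reviews))  # {'inquiry_before_prescription': 2.5}
```

The numbers matter less than the habit: each tester answers the same questions, and the free-text failure modes keep the detail that a score would flatten.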

Regarding testing methodology, I arrived at an insight I now consider non-negotiable for human-centered AI: builders test to make the tool work, especially when fatigued after hours of iteration. Only disinterested testers can reveal where the system truly breaks.

I therefore relied on independent testers who could press in ways I could not—uncovering how defaults reveal themselves under stress, rather than confirming where the system performs well.

User Posture: Why Open-Ended Tools Still Produce Passivity, and How I Responded

Because so many AI systems quietly train people to become passive requesters or compliant respondents, even a Socratic-and-compassionate tool can accidentally slip into feeling like a questionnaire—unless the user truly feels permitted, and even invited, to steer.

That’s why I paid close attention not only to what the model said, but to what users believed they were allowed to do in the conversation. Were they interrupting when something felt off? Redirecting the inquiry toward what mattered most to them? Asking for options, scripts, or directness the moment they needed it? Challenging the framing itself? Or were they answering obediently, waiting politely for the next prompt, shrinking back into the familiar role of recipient rather than co-creator?

This is where agency becomes the real product I’m building toward. A pen doesn’t produce beautiful handwriting on its own; the writer has to learn—through trial, feel, and gentle correction—how to hold it, how much pressure to apply, the rhythm that makes the line alive.

Reflective AI asks for a similar new literacy: the willingness to challenge the tool, to break the expected pattern, to voice exactly what is needed—even when that feels unfamiliar or vulnerable. And it asks the tool to meet that willingness with both sharpness and care: the Socratic edge that refuses to let unexamined habits of passivity stand unchallenged, and the compassionate presence that holds whatever arises—hesitation, doubt, the old conditioning—without judgment, without hurry, with steady empathy and love.

My design response was therefore to embed a clear, explicit permission structure in the system’s core instructions: users can directly tell the mirror how they want it to respond in any moment—“give me a script right now,” “be more direct,” “skip the questions and list options,” “hold space while I think this through,” or request a temporary shift to answer-oriented mode. The model is instructed to honor those requests immediately and faithfully, without resistance or subtle pushback toward the default posture.
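In a custom GPT this permission structure lives in natural-language instructions, but the rule it encodes can be sketched as code for clarity. Everything here is a hypothetical illustration: the `Posture` names, the cue phrases in `REQUEST_CUES` (taken from the example requests above, plus one invented cue for returning to inquiry), and the `next_posture` function.

```python
from enum import Enum


class Posture(Enum):
    INQUIRY = "inquiry-first"    # the default reflective posture
    ANSWER = "answer-oriented"   # scripts, options, direct recommendations
    HOLD = "hold space"          # stay present without questions or advice


# Cue phrases mapped to the posture the user is asking for.
REQUEST_CUES = [
    ("give me a script", Posture.ANSWER),
    ("be more direct", Posture.ANSWER),
    ("list options", Posture.ANSWER),
    ("hold space", Posture.HOLD),
    ("back to questions", Posture.INQUIRY),  # invented cue, for illustration
]


def next_posture(user_message: str, current: Posture = Posture.INQUIRY) -> Posture:
    """Honor an explicit user request at once; otherwise keep the current posture."""
    text = user_message.lower()
    for cue, posture in REQUEST_CUES:
        if cue in text:
            return posture
    return current


print(next_posture("Skip the questions and list options, please."))  # Posture.ANSWER
```

The point of the sketch is the asymmetry in who steers: the posture changes only when the user asks, and the system never argues its way back to the default.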

Beta feedback confirmed this helps preserve real agency, but it also surfaced a genuine tension: when someone arrives carrying an acute practical need (a script right now, not another layer of inquiry), the inquiry-first, compassion-held posture can feel too slow or indirect.

That feedback is inconvenient, as good feedback usually is—it forces respect for trade-offs. Reflective systems are not universally optimal. People arrive in different states; sometimes they need a guided inquiry held with care, sometimes they need speed and concrete next steps.

The design task is not to collapse inquiry into advice by default, nor to pretend the hybrid posture can perfectly serve every moment. Instead, it is to preserve the inquiry-first, compassion-held values while creating clear, low-friction, user-initiated pathways out of that posture when needed.

I acknowledge that my solution is not perfect. It relies on the user knowing (or discovering) that they have this permission, reading and remembering the instruction, and feeling empowered enough to speak it aloud—none of which can be guaranteed. The responsibility is shared: I must make the permission structure as clear and accessible as possible without cluttering the experience, and the user must take ownership of steering when their needs shift. If they don’t, the default remains inquiry-and-compassion, which may frustrate in urgent moments. That is the honest limit of the current design—a deliberate choice to prioritize agency over omniscience, even when it leaves some users momentarily unmet.

My deeper commitment remains: to keep evolving the mirror so that reflective depth and practical responsiveness can coexist more fluidly—without ever letting one quietly erase the other.

Closing: The Question I Keep Returning To

Building AI tools isn’t just engineering; it’s value mediation. We’re not only designing outputs. We’re designing defaults, power dynamics, and what counts as “normal.”

That’s why “helpful” isn’t automatically ethical. Help can quietly become controlling when it centers a worldview as neutral, prescribes before understanding, or replaces the user’s meaning with the model’s clarity.

The deeper lesson is both technical and philosophical: AI doesn’t only reflect users; it reflects the defaults of the system that produced it. That center is the hidden baseline that poses as a universal normal, the unmarked vantage point that passes as “just how things are” and ranks everything else as deviation. When it stays invisible, it becomes powerful.

So the question I keep returning to—Socratic, persistent, slightly annoying in the best way, yet held in compassion—is simple to ask and difficult to answer:

Can a reflective tool ever escape having its own center?

Or is the real work to keep naming that center clearly, challenging it honestly, holding the tension it creates with compassion, and designing right alongside it—rather than pretending it doesn’t exist?

And for those of us building:

How can we embed the habit of naming, challenging, and compassionately holding the center so deeply into the tool’s structure—its prompts, defaults, architecture—that the next person who forks it, remixes it, or builds on it inherits that practice automatically, before the center has a chance to quietly hide itself again?

Perhaps the answer lies not in ultimately escaping the center, but in sustained awareness. Not in perfect neutrality, but in honest self-knowledge. And this very awareness—this lucid self-seeing—may itself be the most precious “default” we can pass on.

Key takeaways

“Helpful” is an interaction posture, not a neutral virtue. It carries assumptions about authority, baselines, and what counts as “reasonable.”

Cultural bias often shows up as framing, not stereotypes. Watch for baseline drift, framing asymmetry, epistemic authority, and premature prescription.

Reflective AI should be evaluated beyond accuracy. Track agency signals (who steers), pacing, inquiry quality, and failure modes under pressure.

User posture is part of the system. People arrive conditioned to be requesters or respondents; a Socratic tool needs to invite the third role: co-inquirer.

Designing with AI is value mediation. Defaults become invisible power, so the real work is making the center explicit, then designing so that multiple cultural logics can stand side by side, held with both clarity and compassion.

In the end, what we are building is not merely a tool, but an ethics of dialogue; what we are passing on is not merely function, but the possibility of relationship.

When technology learns to remain compassionate in its questioning, and to stay sharp while holding space, perhaps that is when we truly begin to design intelligence worthy of trust.

About the Author

Hi, I'm Zoe. I am a Learning Experience Designer and Behavioral Strategist working at the intersection of learning science, psychology, and human-centered AI product design—with a focus on designing interfaces and experiences that don’t just produce output, but foster self-understanding and durable skill-building. If your team is building AI tools for learning or behavior change and you value both rigor and care, I’m open to conversations about Learning Experience Design, Behavioral Design, and Human-Centered AI product roles.