You Should Care About the Illusion of Technical Grace: AI Makes You Look Skilled Before You Are

A field guide to staying honest in the age of instant polish

A friend recently shared a caution that has settled deeply into my bones:

“In this era of AI, we must be careful not to fool ourselves.”

AI can generate the surface of mastery fast. The real work is keeping our self-assessment honest—and designing tools and learning experiences that build durable capability, not just polished output.

We are living through a period of profound historical compression. The distance between having an idea and seeing a professional result has effectively vanished. We can now prompt a vision into existence in seconds. It is, by all accounts, a miracle of efficiency.

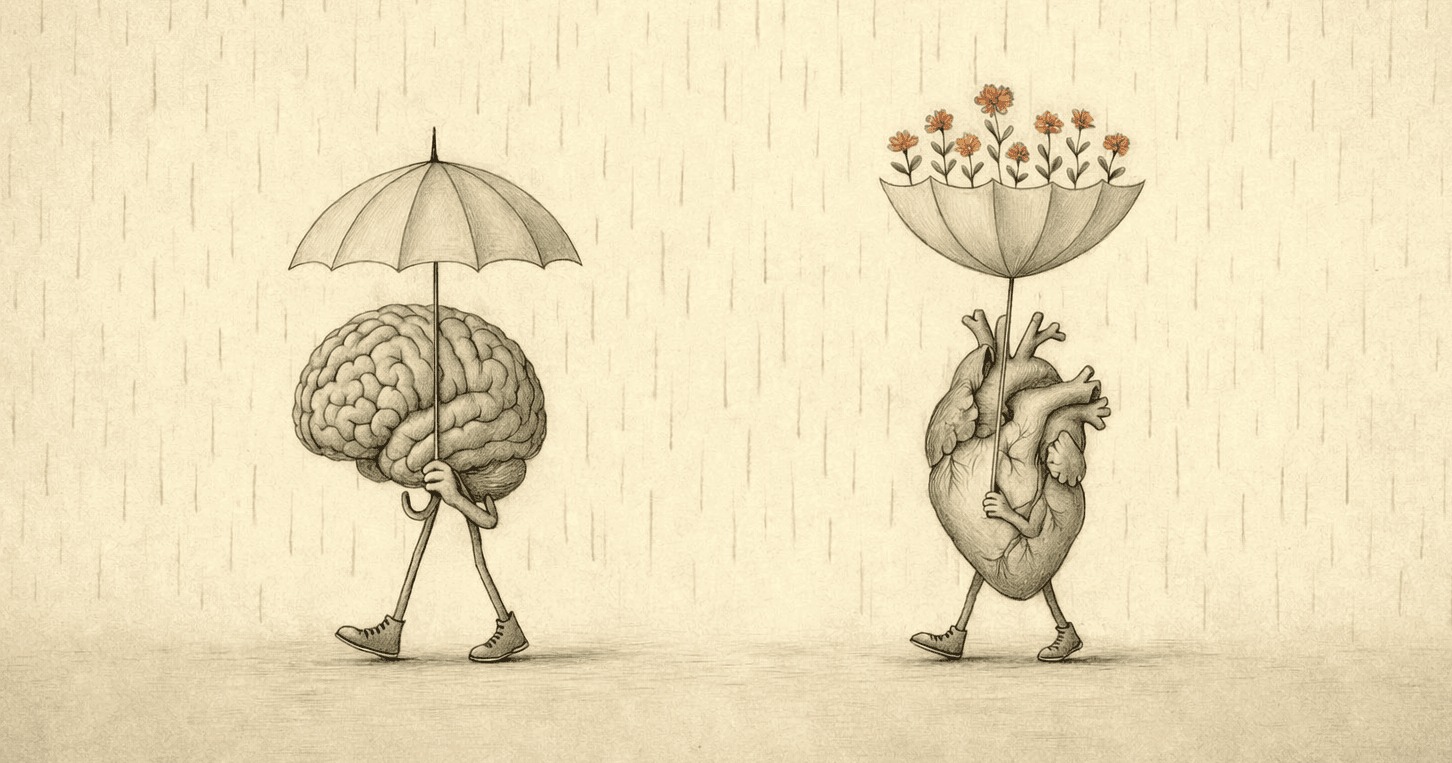

But as a practitioner of learning experience design and a student of the human mind, I also see a shadow accompanying this light. It is a phenomenon I have come to call Technical Grace.

Technical Grace is the ability to produce work that carries the aesthetic markers of mastery—without the practitioner having endured the long, transformative labor of the craft.

It is not a form of cheating, nor is it something to be feared. It is simply a tool doing what tools do: extending our reach.

And yet, it carries a quiet danger: a subtle, persistent illusion that we know more than we truly know, and that we are capable of more than we could ever sustain alone.

When the Outcome Mimics Mastery

AI allows us to manifest outcomes that look like the work of a seasoned expert. That's part of its power and part of its seduction.

In this new landscape, we are invited to be more rigorous in our self-examination: Does the outcome represent a growth in our own capability, or merely a growth in our access?

I do not say this to diminish the thrill of creation. Feeling capable is a fundamental human need; self-efficacy fuels momentum. For many of us, AI is the bridge that finally allows us to cross the gap from silence to expression.

But there’s a hidden risk we should name clearly:

When we use AI to bridge the gap between our current skill and a professional output, we risk mistaking the tool’s processing power for our own cognitive development.

The Mirage of Competence

In learning science, we speak of desirable difficulties: real growth requires friction. We expand our capacity by struggling with the medium, whether that is stubborn code, an elusive sentence, or a complex design.

When that struggle is replaced by a seamless prompt, a gentle question becomes necessary for our own growth: Is my "self" actually expanding—or am I simply standing on a taller pedestal?

In the professional world, true capability is not just the artifact we produce. It is our ability to explain the "why" under pressure, to pivot when requirements shift, and to troubleshoot the engine when it breaks. Polish has become a commodity; deep understanding remains a rarity.

Humility: The Billion-Year-Old Cliff

This AI era is a relentless tide, urging us to "ship" faster, louder, and more frequently. And the waves aren't just technical; they're also emotional.

The waves are comparison. The waves are watching others ship products faster. The waves are seeing a polished AI project, and feeling that familiar tightening in the chest—the fear of being left behind. In these moments, humility is no longer just a virtue. It becomes a survival strategy for the soul.

To me, humility feels like a cliff of billion-year-old rock. It is unmoving, untouched by the spray, and entirely unfragile. It is the solid ground that allows me to stand firm while the waves of the world rush past.

Humility does not mean shrinking. It means standing in truth. And it invites us to:

Respect the Effort: Acknowledge that expertise takes time, and there are no shortcuts to wisdom.

Evaluate the Skill: Be precise about where our contribution ends and the machine’s begins.

Acknowledge the Insecurity: Feel the "tightness" of comparison, but refuse to let it steer the ship.

Returning to the Cornerstone

The antidote to the "AI illusion" is not to retreat from technology. That would be like refusing to use a telescope while trying to map the stars.

The key is to evolve our relationship with the tool. I encourage us to move from ego-driven creation, the natural urge to use AI as a mask for our uncertainty, toward a more intentional, human-centered approach.

While AI provides the Technical Grace, our humanity provides the qualities that a machine cannot synthesize at scale:

Discernment: The ability to know not just how to build something, but why it should exist at all.

Ethical Responsibility: The courage to own the consequences of our output.

Lived Experience: The "blood, sweat, and tears" of past failures that inform our current intuition.

Care: The radical, often inconvenient act of truly giving a damn about the human on the other end of our work.

In an era of infinite, synthetic abundance, these traits are the human frontiers that cannot be automated. They are the only things with enough weight to truly resonate through the noise.

Tools will inevitably change, but this collection of human virtues remains the unmoving cornerstone of every meaningful life—and every career built to endure.

A Ritual for the Honest Builder

When I build with AI, I have integrated a small practice to keep my feet on the ground. I ask myself three questions:

What was mine? (My framing, my taste, my critical decisions).

What was the tool’s? (The speed, the polish, the infinite variations).

What will I earn next? (The one skill or layer of understanding I will practice without assistance today).

This is honest growth: turning borrowed capability into earned skill and turning truth into freedom to learn.

A Closing Question for You:

When has AI made you feel more capable than you are—and what practices help you return to what is real?

About the Author

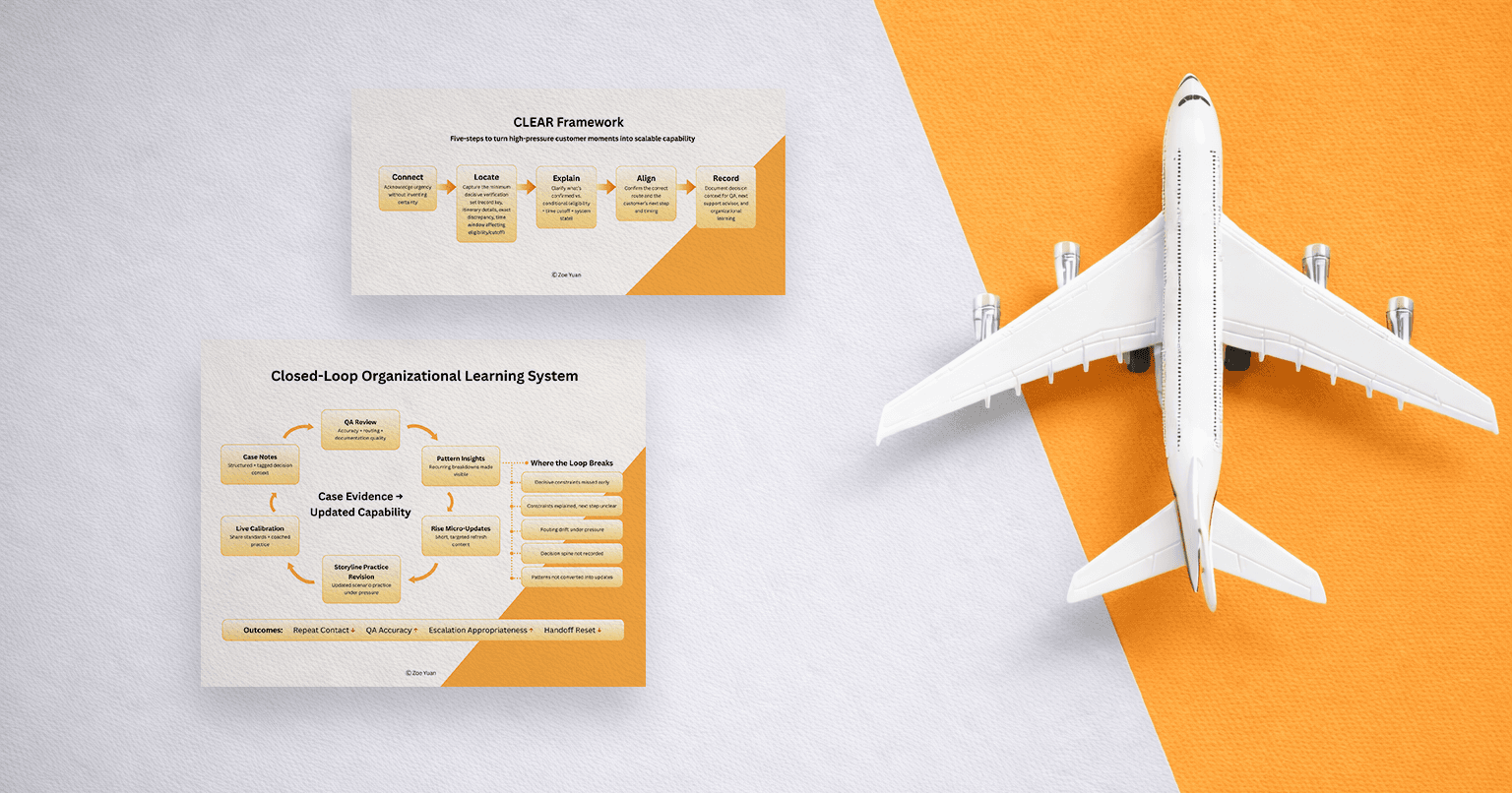

Hi, I'm Zoe. I am a Learning Experience Designer and Behavioral Strategist working at the intersection of learning science, psychology, and human-centered AI product design—with a focus on designing interfaces and experiences that don’t just produce output, but foster self-understanding and durable skill-building. If your team is building AI tools for learning or behavior change and you value both rigor and care, I’m open to conversations about Learning Experience Design, Behavioral Design, and Human-Centered AI product roles.