Why Thinking Like a Psychologist Is the Essential Skill for the AI Era

An Evidence-to-Meaning Framework for discernment, justification, and context-sensitive meaning-making

A Quick Map of This Essay

AI makes fluent output abundant. It does not make truth, understanding, or wise judgment automatic. This essay argues that what humans need now is thinking like a psychologist—a way of reasoning that stays both method-honest and human.

For me, this kind of thinking isn’t a trait. It’s a practice—one I organize as a path from evidence to meaning, built on three capacities:

Discernment: tell the difference between a claim that sounds right and one that evidence and method actually support.

Justification: make thinking accountable—write conclusions with clear limits, no more than the method allows.

Context-Sensitive Meaning-Making: interpret evidence inside real lives, real pressure, and real cultural contexts—including when AI “fills in” context that it doesn’t actually understand (e.g., treating “success” as individual self-actualization when a student’s reality is deeply shaped by family duty and collectivist norms).

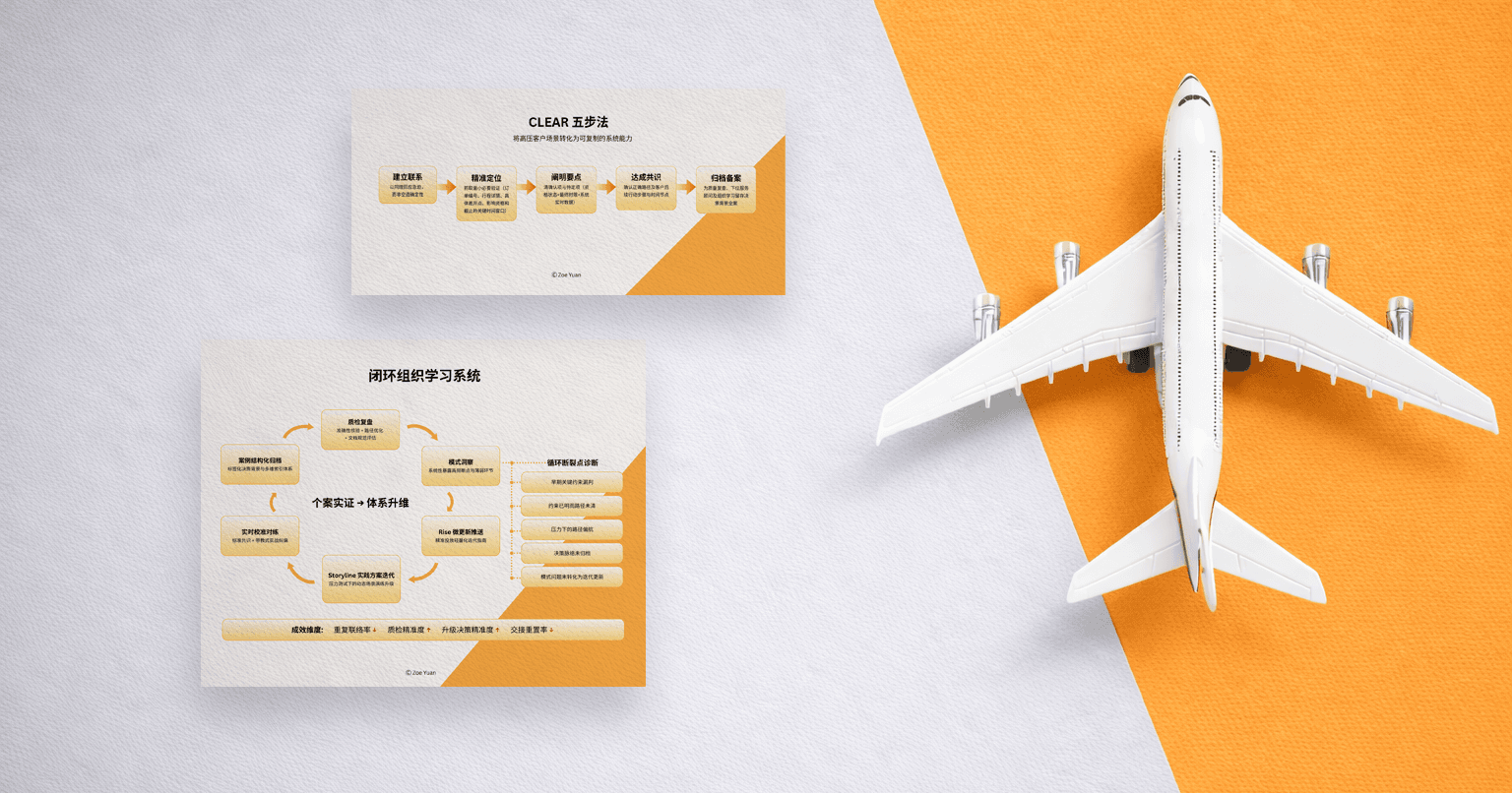

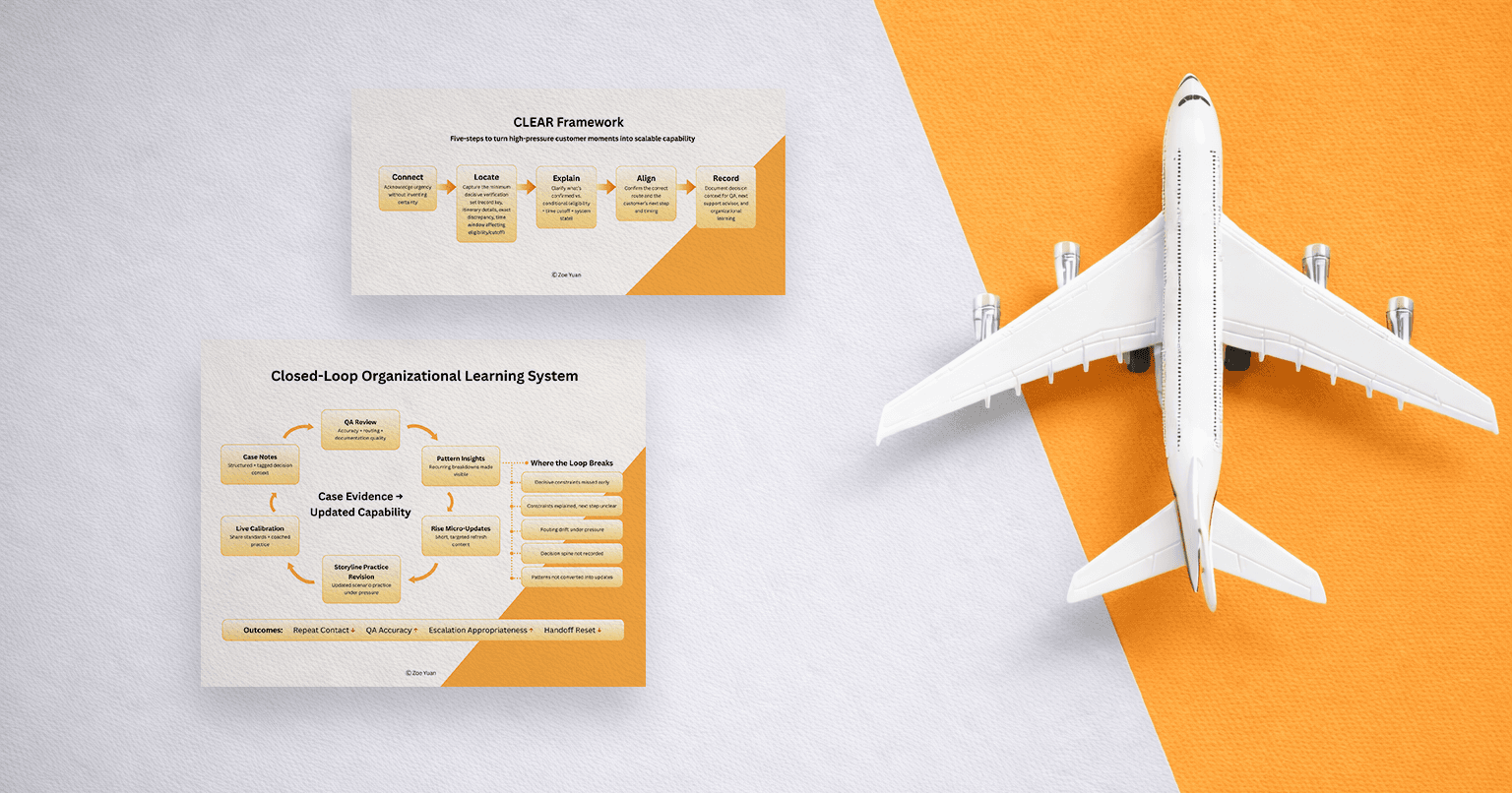

I outline a constructivist approach for teaching Cambridge Psychology (IGCSE/A Level) bilingually (English/Chinese), and I include one concrete lesson design (“87% Agree”) to show how these habits can be built under exam conditions and transferred to the world students actually live in—especially algorithmic content on social media and AI-generated answers.

The Gap Between Output and Meaning-Making

I once earned an A+ in a statistics course. The grade felt like an acknowledgement, and I worked hard for it. And still, walking out of the final, I carried a quieter truth: I wasn't sure what I could do with those skills outside the classroom.

If you asked me what my results justified (what I could conclude, and what I couldn't), I could answer. I had to. That was the language the course taught.

What I couldn’t do was the other part: if you asked me what those numbers meant in human terms—what story they were allowed to tell, and what they were flattening into abstraction—I didn’t have words for it. I only felt a subtle emptiness, a disconnection—like I did everything right, and yet something wasn’t right.

That was pre-AI, which is why I don't see AI as the beginning of the problem. AI simply makes this kind of gap impossible to ignore. When output becomes effortless, education can't hide behind fluency anymore. We're forced to ask what learning actually is, and what kind of thinking still holds when sounding right is cheap.

So what do humans need to learn now that AI can do so much?

My answer: thinking like a psychologist—using scientific reasoning to evaluate claims, weigh evidence, and draw justified conclusions under uncertainty, while staying grounded in what those conclusions mean for real human lives.

Here's the problem I'm trying to solve: education can train people to produce pure scientific output (clean analysis, correct procedures, justified conclusions) while leaving them disconnected from what those conclusions mean and when they should be trusted. In the AI era, that separation becomes dangerous because fluent output is abundant while understanding is scarce.

Psychological understanding, at its best, is both scientific thinking and meaning-making—rigor plus care for the human condition. When those two are separated, students can look competent on paper and still feel empty inside. When they’re integrated, students gain something sturdier: they can think clearly and stay human.

This is the Evidence-to-Meaning Framework I’m actively developing for teaching Psychology under external exam conditions (IGCSE/A Level) through bilingual instruction (English & Chinese). It’s grounded in constructivism and built for a reality we can’t avoid: the AI era makes fluent output abundant, but it doesn’t guarantee truth, understanding, or transferable judgment.

Why “Critical Thinking” Alone Isn’t Enough

When people talk about essential human skills, they often say “critical thinking.” I agree—critical thinking is necessary. But in the AI era, it’s also not specific enough. The problem isn’t only that people fail to think. It’s that they confuse fluency with truth, and confidence with credibility.

Part of the confusion is that "critical thinking" covers different traditions.

Humanities-style critical thinking emphasizes human sense-making: holding multiple perspectives, noticing language and power, and asking what a claim means for real people.

Psychological thinking doesn’t compete with that. It integrates it—then adds empirical accountability: it still asks, What does this mean for the human in front of me? But it also asks a gatekeeping question that prevents confident storytelling from becoming “truth”: What does the evidence justify?

In the AI era, that constraint matters because AI can generate persuasive interpretations quickly and even cite sources. But it doesn’t reliably hold itself to methodological limits—especially when evidence is weak, context is missing, or claims are overstated.

So the skill I care about isn’t “critical thinking” as a slogan. It’s a form of thinking that moves from evidence to meaning responsibly—through discernment, justification, and context-sensitive meaning-making.

The Constructivist Foundation

Here’s the educational psychology belief underneath everything I’m proposing:

Knowledge is constructed, not transferred.

When instruction is detached from lived experience, students can borrow the language without owning the insight. They can perform the moves, but the understanding doesn’t stick. And when assessment rewards output more than reasoning, the system trains fluency—whether the fluency comes from memory, coaching, or AI.

Constructivism is the idea that understanding is built, not poured in. Students begin with what they already assume, meet evidence that challenges it, and then reconstruct their thinking through dialogue, feedback, and revision. That’s why my lessons are designed to create cognitive conflict without shame: because conflict is what makes the concept stick, and safety is what makes students honest enough to learn.

The Evidence-to-Meaning Framework

I call this the Evidence-to-Meaning Framework because it treats learning as a path: from what looks true, to what can be justified, to what it means in real human conditions.

+ Discernment

Separating what feels persuasive from what evidence and method support.

Question: What counts as evidence here?

+ Justification

Making reasoning visible and disciplined—stating what the evidence allows, what it doesn’t, and why.

Question: What can I conclude—and what can I not conclude?

+ Context-Sensitive Meaning-Making

Noticing how pressure, incentives, language, and culture shape what people report, learn, and “agree” with—and translating evidence into careful human conclusions without flattening nuance.

Question: What does this mean for real people in this context?

Memorizing concepts is necessary. It just isn't sufficient. What matters is whether students can use concepts to discern, justify, and make meaning responsibly, especially when fluency, numbers, or trends try to masquerade as truth.

Why design for IGCSE and A Level at all? Because psychological thinking can be learned at a younger age, and it matters earlier than we tend to admit. I learned psychology late, after years of mistaking performance for understanding. Sometimes I wish I had met these ideas earlier, when my sense of self was still forming, and school pressure still felt like gravity. In the AI era, timing matters even more.

What Cambridge Writing Is Actually Testing (and why that shapes my design)

Cambridge doesn’t only test whether students “know Psychology.” It tests whether they can use psychological knowledge in disciplined ways.

At IGCSE, assessment objectives emphasize (1) knowledge of terminology/concepts/methods, (2) applying psychology to scenarios, and (3) analysis/evaluation, such as reaching conclusions from data and evaluating research methods (validity, reliability, ethics). And the command words themselves tell you what writing must do: explain requires reasons supported by relevant evidence; justify explicitly requires evidence/argument; suggest requires applying knowledge to propose valid responses.

At A Level, Cambridge is explicit about the three-part writing demand: AO1 (knowledge/understanding), AO2 (application to scenarios, developing arguments), AO3 (analysis/evaluation, strengths/weaknesses, reasoned conclusions from evidence). The paper format makes this concrete: Paper 3 includes a structured essay with a 6-mark “describe” section and a 10-mark “evaluate” section.

So when I say I teach “discernment” and “justification,” I’m not importing extra ideology into an exam course. I’m aligning students’ habits with what Cambridge writing already demands: accurate description, defensible inference, and bounded evaluation—then adding the third layer Cambridge doesn’t name explicitly but students desperately need in real life: meaning-making that stays context-aware.

Teaching Cambridge Psychology in China and Why Bilingual Matters

Because IGCSE and A Level Psychology sit inside a Cambridge (British) curriculum, the course isn’t only content—it carries assumptions: what counts as “healthy,” how emotion is talked about, what evidence is trusted, and how the individual is positioned inside society. Teaching it in China means I can’t treat the syllabus as culturally neutral.

That’s where bilingual teaching becomes part of the Evidence-to-Meaning Framework. Language shapes what students can notice (discernment), what they can defend on the page (justification), and what feels safe to admit in a classroom (context-sensitive meaning-making).

A simple example: I introduce self-esteem in Chinese first—not as a definition, but as a local frame:

“Self-esteem 在中文语境下也可以表达——你是不是对自己(的能力)有足够的信任和尊重?”

“Self-esteem, in a Chinese context, can also be expressed as: Do you have enough trust in—and respect for—yourself (and your abilities)?”

Students do a 60-second anonymous quick-write about when they feel least confident in class (often: 不敢举手, "afraid to raise my hand"; 怕出错, "afraid of making mistakes"; 怕丢脸, "afraid of losing face"). We read a few lines neutrally, not to analyze anyone, but to notice what this concept touches here.

Then we switch into English: the Cambridge term, the official definition, and how it's measured. This is where discernment becomes visible: separating the construct from the measure, and noticing what a measurement might capture even though meaning doesn't always travel cleanly between languages. Finally, students shift into English exam mode for justification: one limitation and one improvement (IGCSE), or deeper evaluation (A Level). The goal isn't translation. It's bi-contextual understanding.

Proof of Concept: The “87% Agree” Lesson (Prosocial Behaviour + Research Methods)

Up to now I’ve been talking at the level of framework. Here’s what it looks like when the ideas have to survive a real classroom: time pressure, external marking standards, and teenagers who are deciding—consciously or not—whether they can trust their own thinking.

To demonstrate the design spine, I’ll use one lesson as an example. This isn’t a “one-size-fits-all” template or the only lesson I would teach. It’s a single case showing how the Evidence-to-Meaning routine can scale in depth across IGCSE and A Level under Cambridge-style external assessment.

When I design for both levels, I keep the reasoning pattern consistent, but I raise the bar on depth and precision. IGCSE focuses on clean identification and one-step evaluation; A Level demands tighter justification—alternative explanations, methodological critique, and disciplined limits on conclusions. Same backbone, higher bar.

Step 1: Start with a claim that feels like a truth

I display:

“If most people agree on something, it’s probably true.”

Then add: “87% agree.”

My design decision (Discernment): I use a number on purpose because students—and adults—often treat quantity as a shortcut to truth. The point is not to catch them. It’s to let them notice the moment a claim feels earned before we’ve asked what it measures.

Step 2: Protect honesty before we test judgment

Before students respond, I say:

“This isn’t a personality test. We’re studying how pressure affects judgment.”

My design decision (Context-sensitive meaning-making): In a high-pressure classroom culture, students will perform if they feel evaluated as people. Performance distorts the data. Psychological safety isn’t “extra”—it’s a condition for valid observation.

Step 3: Run a micro-experiment that makes pressure visible

We run a quick experiment, using a multimedia tool to collect in-class survey responses:

Public condition: students believe their rating appears next to their name on the projected screen

Private condition: only I see their response

Everyone rates the statement on a scale of 1–7. Five minutes. No discussion.

My design decision (Discernment → Meaning): The public/private split externalizes something students already live with: how social exposure changes what feels sayable.

Step 4: Force the pivot from feeling to method

Immediately after, we pivot:

“What evidence would make this statement true?”

Most students point to “87%.” Perfect. That’s the real starting point.

My design decision (Discernment): I don’t try to erase the instinct. I discipline it. “87%” becomes the doorway into a better question: 87% of what, measured how, compared to what?

Then I give them tools that match Cambridge writing moves—separating claim, evidence, and method, then naming limits:

Claim: what’s being asserted?

Evidence: what observation supports it?

Method: how was it measured?

Inference: what links the evidence to the claim?

Limits: what can’t we conclude?

Step 5: Make justification visible on the page

I give them a one-page study summary (exam format) of what we just did.

Task: Evaluate validity.

Does a 1–7 rating measure truth judgment, or self-presentation?

If public ratings increase, did belief change—or caution increase?

What confounds exist? What alternative explanations still fit?

What would improve the design?

My design decision (Justification): Cambridge writing is allergic to vague confidence. It rewards bounded conclusions—especially when students can justify with evidence and method. So students practice the core move: write what the method allows—no more, no less.

Step 6: Different levels, different demand (same backbone, higher bar)

Students write under time pressure—but the bar changes by level.

IGCSE: one clear validity issue + one improvement, in plain exam language.

A Level: add one constraint—name one alternative explanation the design can’t rule out, and tighten the conclusion so it doesn’t overreach.

My design decision (Justification): The difference between levels isn't topic; it's precision. And Cambridge explicitly expects that precision at A Level through the describe/evaluate essay structure and a higher evaluation demand.

Step 7: Construction happens through peer friction + public learning

Then students swap in pairs.

IGCSE peer task: underline the claim, circle the evidence, write one question that tests the limit of the conclusion.

A Level peer task: do the same, then add one sentence: “If this alternative explanation were true, what would we see instead?”

We bring it back to the room. I don’t ask for “the right answer.” I ask for better reasoning—and the prompt changes by level.

IGCSE public share: Where did your partner’s reasoning outrun the method? Where did they draw the boundary well? What did they assume without evidence?

A Level public share: What alternative explanation still fits? What confound is most plausible here? If you had one change to the design, what would most increase validity—and why? What is the most honest conclusion you can write in one sentence?

Then we share anonymized excerpts and explain what happened: where the reasoning stayed within the evidence and where it overreached—so students learn in public while individual scores remain private.

My design decision (Context-sensitive meaning-making): We finish with a one-line exit ticket: “Where does social proof show up in your life (online, in school, or in AI answers), and what’s the first question you’ll ask to test it?” That’s the bridge from method to habit.

Why This Matters in the AI Era Without Moral Panic

One day, I was using AI to brainstorm ideas when it gave me a sentence that sounded like a finished thought:

“Most corporate training fails not because people aren’t motivated, but because…”

I didn’t even read the second half. The word “most” stopped me.

“Most” is a claim dressed as a shortcut. It quietly demands a denominator: Most of what? Across which trainings? Measured how? Over what time horizon? And I’ve noticed AI loves this pattern—clean generalizations that feel true because they’re well-crafted.

When I asked for sources, the tool got even more persuasive. It added nuanced language and cited studies. It sounded careful. But when I clicked into what it referenced, I immediately felt the gap: the numbers reported and the claim made weren’t aligned. AI was drawing causal conclusions that the study design couldn’t justify. The citations weren’t proof—they were a costume for certainty.

And this isn’t only about school essays. For most teenagers, belief is increasingly formed inside algorithmic content—short, confident claims optimized for attention, repeated until they feel like common sense. In that environment, the risk isn’t just misinformation. It’s losing the habit of knowing why you believe what you believe.

That’s why I teach psychological thinking as an Evidence-to-Meaning practice: discernment to separate claim from evidence, justification to write only what the method allows, and context-sensitive meaning-making to catch what a claim quietly assumes about “a good life,” “a successful student,” or “healthy development” in the first place—especially when AI fills in those assumptions with a culturally default story.

And the outcome I’m aiming for is not that students become cynical or hyper-skeptical. It’s that they can say something simple, with a steadier voice:

Now I can be more confident in how I evaluate: what to question, what to test, what I can—and can’t—conclude, and what’s actually worth drawing meaning from.

AI isn’t creating new weaknesses. It’s magnifying old ones. Which means the solution isn’t to ban tools—it’s to strengthen the human practice beneath them.

The Larger Point

The question “What should we teach in the AI era?” is often framed as a curriculum question. I think it’s a human question: when language becomes effortless, it becomes easier to confuse sounding right with being right—and even easier to confuse being right with being okay.

That’s why I don’t view Psychology as content students “cover.” I see it as a way of keeping their footing.

When students build discernment, they learn to distinguish between persuasion and proof—between social proof and evidence, between a clean sentence and a supported claim. But discernment alone is still internal. It can stay private, instinctive, even fragile.

That’s why justification matters. Justification is where thinking becomes accountable. It’s the discipline of putting your reasoning on the page with honest limits: Here is what the method supports. Here is what it does not. Here is why. In a Cambridge-style external assessment culture, that skill is exam-relevant—because command words like justify explicitly require evidence and argument. In an AI culture, it’s life-relevant—because fluency no longer signals understanding.

And then there’s the layer of education often skipped: context-sensitive meaning-making. Evidence doesn’t float above human life. It lands in particular bodies, families, languages, and cultures. Teaching a Cambridge syllabus in China makes this visible: the same concept can carry different social risks, different moral weights, and different assumptions about self, responsibility, and “success.” If students can’t move between contexts, they can justify an answer and still miss what the knowledge is for.

This is why I treat Psychology as the training ground, not the finish line. I'm illustrating the Evidence-to-Meaning Framework through Cambridge Psychology, but the practice isn't limited to Psychology. It applies anywhere students face confident claims: AI answers, headlines, "study says" posts, even everyday arguments with friends. Once students learn to discern what's being claimed, justify what's actually supported, and make meaning without overreaching, they can carry that habit into any subject and into the choices they make outside school.

So yes—students must memorize. And yes—students must meet external marking standards. But memorization and performance are not the end. They are the surface. Underneath, the deeper outcome is whether a student can do three things under pressure: discern what is being claimed, justify what is actually supported, and make meaning without turning evidence into a story it didn’t earn.

That’s the standard I’m building toward: a classroom where rigor doesn’t make students cold, and meaning-making doesn’t make them sloppy. In the AI era, output will continue to get cheaper. What’s still in our hands is whether students keep their footing—whether they leave school with borrowed sentences, or with a mind that can verify, a voice that can justify, and a way of thinking that stays human while the tools get more powerful.

That’s the work. And it has just begun.

About the Author

Hi, I'm Zoe. I am a Learning Experience Designer and Behavioral Strategist working at the intersection of learning science, psychology, and human-centered AI product design—with a focus on designing interfaces and experiences that don’t just produce output, but foster self-understanding and durable skill-building. If your team is building AI tools for learning or behavior change and you value both rigor and care, I’m open to conversations about Learning Experience Design, Behavioral Design, and Human-Centered AI product roles.