From Self-Awareness to Creative Authorship: A Psychological Framework for Building AI Literacy

Why AI programs need to teach agency, not just prompts

In today’s world, AI literacy is usually taught as a technical skill: how to write better prompts, iterate faster, and optimize outputs. But the deeper question is more psychological than technical. Before someone can “use AI well,” they need to understand something about themselves—how they think, what they value, and what they’re trying to create in the first place. Otherwise, AI becomes the hidden author of their decisions. They may look competent on the surface, producing polished results quickly, yet struggle to explain the logic behind them or notice when the output conflicts with their lived experience.

That’s the core argument of this essay: AI literacy requires a psychological foundation before technical training. When learners develop self-awareness first, they can direct AI rather than be directed by it. What follows is a framework I’ve been developing through co-teaching creative AI curriculum at NYU Shanghai and designing reflective AI tools. It’s built at the intersection of art, psychology, and learning design, and it’s anchored in a simple belief: agency is not a feature you add later—it’s the foundation you build first.

How I Came to AI Literacy Through Art

I came to AI literacy from an unusual direction. My earliest training was in art, where the real education has never been just about “making something look good.” In practice, aesthetic execution might be 30% of the work. The other 70% is research, thinking, exploration, discernment, and the inner process of learning what I actually want to express. Art trained me to live inside questions, tolerate ambiguity, and build a relationship with taste—because without taste, creation collapses into repetition.

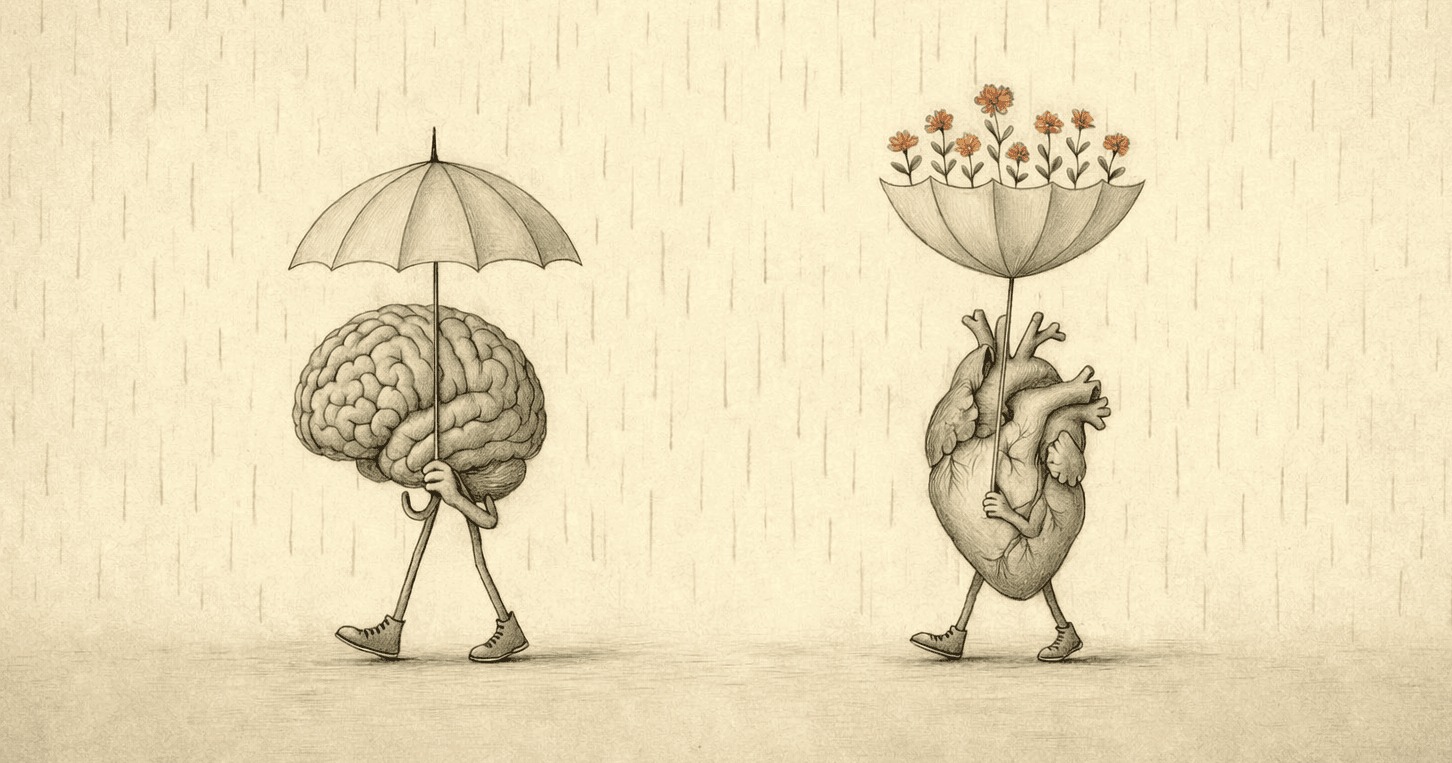

AI can automate much of that 30%—the visible layer of execution. If the primary goal is simply to produce a deliverable, that can feel like the entire job. AI can generate imagery, produce drafts, propose frameworks, and simulate stylistic coherence at scale. But the part that makes any work worth doing—the part that gives it direction and integrity—still lives in the remaining 70%. Meaning-making cannot be outsourced. Judgment cannot be automated. Neither can the slow work of clarifying what matters and why.

This is why, as execution gets cheaper, the scarce resource isn’t output. It’s authorship—not authorship as “content,” but authorship as a human capacity formed through lived experience: the accumulation of perception, mistakes, refinement, and growth. It isn’t a style or a format. It’s the ability to stand behind a choice, to hold a point of view, and to mean it.

And this is where many AI literacy programs quietly fall short. They teach people how to operate the tool—how to prompt, iterate, and polish—without strengthening the inner skills that keep the tool in its place. Technical fluency can produce impressive results, but without self-awareness, it also increases the risk of drift: outputs that sound right, look right, and still fail to reflect what is actually understood, valued, or intended. Real literacy, then, has to begin before prompts. It begins with self-knowledge—learning what matters, why it matters, and for whom the work is being made.

The Competence–Agency Gap: When Fluency Becomes a Trap

Many AI courses teach technical fluency and assume agency will naturally follow. But fluency without psychological grounding creates a subtle, dangerous gap. Learners become faster at execution while becoming less practiced at asking the harder questions: Does this reflect reality? Does it reflect what I value? Is it actually what I mean? They learn to refine outputs, but not to challenge the assumptions inside them. They get skilled at polishing what the machine offers—yet undertrained in overriding it.

A seductive workflow reinforces this: receive a task, ask AI for a solution, refine the output, submit. It’s smooth. It looks professional. It feels like competence. But later, when someone asks for the reasoning—why this solution fits, what trade-offs it carries, what it depends on—people often discover they’re repeating machine-shaped logic. They can produce. They can’t always stand behind what they produced. That’s the competence–agency gap: appearing capable while quietly outsourcing judgment.

Here’s what usually goes unnamed: agency isn’t only the ability to notice when something feels off. It’s the ability to say—plainly—what you know, what you don’t know, and what you’re assuming. That’s what I mean by justification. Not academic justification—human justification: Why do I believe this? What am I basing it on? What would change my mind? Without that habit, AI fluency becomes a confidence costume. With it, the tool stays useful—but it can’t quietly become your reasoning.

And the risk is amplified by how AI fails. AI rarely feels “wrong” in a way that alarms you. It feels safe. Coherent. Neatly packaged. It delivers what I call technical grace: work that carries the aesthetic markers of mastery—without the practitioner having endured the long, transformative labor of the craft. In practice, it sounds polished enough to pass, yet generic enough to slide past the truth of a specific context.

So the real question isn’t “Is this good?” It’s: Do I actually know this is true, or do I just like how it sounds? Without the metacognitive habit to notice that gap, and the courage to pause inside it, learners don’t become authors. They become fluent executors.

A Moment of Dissonance at NYU Shanghai

I saw this dynamic most clearly while co-teaching a creative learning design course at NYU Shanghai for an international student cohort. We designed the course’s use of AI around a different question than “how do we get better outputs?” Instead, we asked: how do we help learners stay in the driver’s seat? If AI is going to be a permanent part of their creative and professional landscape, the goal isn’t just proficiency with tools. The goal is psychological sovereignty.

We structured the course in a three-phase progression, treating the learner’s internal state as a prerequisite for using the tool. In Phase 1, we worked on the psychological foundation before introducing any AI tools. I integrated CASEL’s social-emotional competencies through reflective journaling and structured dialogue, enabling students to clarify their values, lived experiences, and communicative intent. When a learner knows what they want to say, AI becomes directional. Without that, AI becomes suggestive—and suggestions become decisions.

In Phase 2, we intentionally introduced what I call productive friction. Students created a collage using AI-generated imagery alongside physical materials such as magazine clippings, paint, and found objects.

The assignment was to express a “passion.” I chose this word on purpose. Passion activates emotional intelligence and deeply held values, and when an assignment touches values—not just aesthetics—AI’s limitations become visible in a way learners can feel. When digital outputs and physical materials must coexist, students can witness firsthand what the model can access and what it can’t.

One student attempted to visualize her experience encountering homeless communities while studying abroad. The AI repeatedly generated images of men. But that contradicted her lived reality. In that moment, the class didn’t need a lecture about bias or a technical tutorial about better prompts. The dissonance was visceral. She was forced into a decision: accept the machine’s polished version of “reality,” or reclaim her authorship. She chose the latter. She turned to physical materials, layered magazine clippings and paint, and said something that reframed the entire point of the course: “I need to be in the driver’s seat. I am the artist; the AI is the material.”

That wasn’t a prompting lesson. It was an agency lesson. And once students had that experience, the questions they asked changed. They stopped asking, “What should I prompt AI to make?” and began asking, “What do I want to say—and can AI help me say it?” That shift is the real marker of literacy. The locus of authority moves from the machine to the human. In Phase 3, students carried that same inner grounding into a real client context, designing creative learning experiences for a music-technology startup—where self-awareness sharpened user empathy, and relationship skills strengthened collaboration and decision-making.

Why Agency Matters for Organizations, Not Just Students

This isn’t only a classroom issue. The same competence–agency gap becomes a business risk the moment AI is treated as an efficiency engine—faster content, faster decks, faster planning, faster execution. Efficiency is real, but it’s also a double-edged sword: it automates output while quietly raising the stakes for what can’t be automated—judgment, strategy, alignment, and context-sensitive decision-making. The question I use with teams is the same one I teach students to ask: What are we treating as true here—and what are we assuming because it’s convenient?

When teams lack agency, they fall into what I call useless efficiency: solving the wrong problem 10x faster. The result is illusory solutions—deliverables that look polished and professional, but fail upon contact with reality. Over time, companies accumulate what I describe as AI Dependency Debt: a cycle in which teams keep producing confident-looking work that requires constant correction because it was never anchored in human intent and contextual understanding in the first place.

By contrast, teams with high agency adapt as tools change because their value isn’t in a specific prompt. Their value is in judgment. They bring what I call the human premium: in a world where everyone has access to the same models, competitive advantage comes from the 70%—research, discernment, lived context, taste, and the ability to choose. And in boundary situations—black swan events, cultural shifts, unfamiliar markets—human agency is what recognizes when the machine’s logic no longer applies.

The Strategic Agency Framework (SAF): Four Psychological Capacities

To bridge the gap between human intent and machine execution, I’ve been refining a psychological roadmap I call the Strategic Agency Framework (SAF). At the center of this framework is a simple premise: AI literacy is not just the ability to use a tool. It is the ability to stay oriented while using it. That orientation comes from four psychological capacities—each one protecting authorship in a world where execution is increasingly automated. It’s designed for both students finding their voice and professionals solving complex problems.

1) Self-Awareness — The Intentionality Audit

This is the psychological bedrock. It starts with a rigorous inventory of values, lived experience, and strategic intent—an internal compass that makes evaluation possible. Without it, AI output can only be judged by surface qualities: fluency, coherence, polish. With it, a deeper question becomes available: Does this reflect what is actually meant? The Intentionality Audit is the practice of anchoring in “why” before the first prompt is written, so the tool serves a direction rather than quietly becoming one.

2) Metacognition — The Dissonance Check

Metacognition is the capacity to notice one’s own thinking, especially when something feels subtly off. The Dissonance Check is the trained skill of catching misalignment—when an AI response, no matter how elegant, contradicts internal understanding, contextual knowledge, or lived reality. It is also a form of courage: trusting cognitive appraisal over machine confidence. In a world where language models can sound certain about almost anything, literacy requires the ability to pause, question, and say, not this.

3) Social Awareness — Contextual Integration

AI outputs do not land in empty space. They land inside relationships, cultures, teams, and real human stakes. Contextual Integration is the capacity to ask how machine-generated logic will affect empathy, collaboration, and user-centered outcomes. It protects against the quiet violence of generic solutions—outputs that look “professional” but fail to hold the nuance of the environment they enter. This is where efficiency is balanced with responsibility, and where the social consequences of an answer become part of its evaluation.

4) Creative Sovereignty — The Synthesis Pivot

This is the commitment that completes the framework: remaining the author of meaning. The Synthesis Pivot is the moment where the relationship to AI shifts—away from compliance and toward direction. Here, the 70/30 rule becomes lived practice: AI can support the 30% of execution, but the human must supply the 70% that makes work significant—research, judgment, taste, framing, and the conceptual why. This is what keeps AI as material rather than authority.

Core insight: When AI literacy is grounded in these psychological capacities, authorship stays human. AI stops functioning as a substitute for thought and becomes what it actually is at its best: a powerful material for expression, shaped by clarity, judgment, and lived experience.

Design Principles for Next-Generation AI Literacy Programs

If we want AI literacy that produces authors rather than operators, we need to design programs differently. The first principle is psychology before tools. Self-awareness and metacognition are not “soft skills.” They are prerequisites for safe and meaningful AI use. The second is design for productive friction. We should not optimize for ease. We should create moments where AI fails the learner, because cognitive dissonance is where agency is forged.

A third principle is hybrid materials. The combination of digital and physical makes AI’s boundaries visible and felt, not just explained. A fourth is measure agency, not polish. The assessment question is not “how good does it look?” but “can you articulate the ‘why’ behind your choices, and can you override the machine when needed?” The fifth is build from multiple disciplines. AI literacy is human development. It requires the creative voice of art, the metacognition of psychology, and the structural transformation of learning design.

From Tool to Material

We are moving from an era of tool-using to an era of material-shaping. In the former, we ask what the tool can do for us. In the latter, we ask what we can create with the material—without losing ourselves. If we continue to teach AI literacy as purely technical skill, we risk raising a generation of people who can operate powerful systems while remaining psychologically outsourced to them.

But if we ground AI literacy in agency, we teach something deeper: AI is not the author. We are. The future of AI won’t be decided only by better models or faster processors. It will also be decided by whether humans develop the inner capacity to remain sovereign—to hold meaning steady, even as the world accelerates.

The Leader’s Audit: 5 Questions for Every AI Project

If you’re leading an AI project inside an organization, here are five questions to keep human agency—and therefore quality—at the center of the work.

1) The Human Anchor

What is the one thing about this project that the AI doesn’t know?

This prevents generic, one-size-fits-all solutions.

2) The Dissonance Test

Where did the AI output feel too safe, too easy, or too smooth?

This helps identify the “confident average,” where innovation disappears.

3) The Bias Audit

Does this reflect our specific audience—or a generalized dataset?

This protects against demographic and cultural misalignment.

4) The 70/30 Split

Which 30% was AI execution, and where is the 70% of human judgment?

This ensures the human remains the author, not the assistant.

5) The Rationale Check

Can you explain the logic behind this solution as if the AI never existed, in your own words, with your own assumptions named?

This bridges the competence–agency gap and proves deep understanding.

Note: The SAF draws from the curriculum I co-taught at NYU Shanghai. The university holds IP rights to the course materials; the pedagogical insights and psychological framework presented here represent my professional methodology, developed through that experience and continued work. Student details have been minimized to protect privacy.

About the Author

Hi, I'm Zoe. I am a Learning Experience Designer and Behavioral Strategist working at the intersection of learning science, psychology, and human-centered AI product design—with a focus on designing interfaces and experiences that don’t just produce output, but foster self-understanding and durable skill-building. If your team is building AI tools for learning or behavior change and you value both rigor and care, I’m open to conversations about Learning Experience Design, Behavioral Design, and Human-Centered AI product roles.